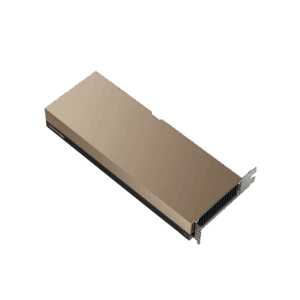

NVIDIA A10

Universal mainstream data center GPU based on Ampere — 24 GB GDDR6, 150W, single-slot. Accelerates graphics, AI inference, virtual workstations, and video in the broadest range of enterprise servers.

🚀 Express Shipping Available Across Europe & MENA Dismiss

Showing 1–16 of 22 results

Universal mainstream data center GPU based on Ampere — 24 GB GDDR6, 150W, single-slot. Accelerates graphics, AI inference, virtual workstations, and video in the broadest range of enterprise servers.

Ampere Tensor Core GPU with 80 GB HBM2e and 2 TB/s memory bandwidth. The proven workhorse for AI training, HPC, and data analytics with Multi-Instance GPU (MIG) for secure partitioning.

Quad-GPU Ampere board purpose-built for high-density, graphics-rich VDI — 64 GB GDDR6 ECC, four GPUs per card, and up to 2x the user density for enterprise virtual desktops.

Low-profile, low-power edge inference GPU with 16 GB GDDR6 ECC in a 40–60W envelope. Brings NVIDIA AI to space- and power-constrained edge and enterprise servers.

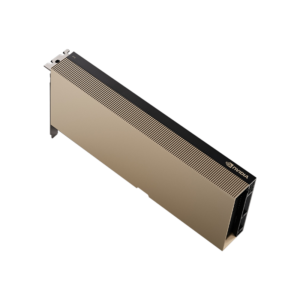

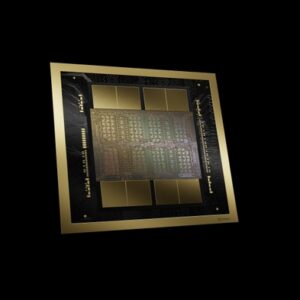

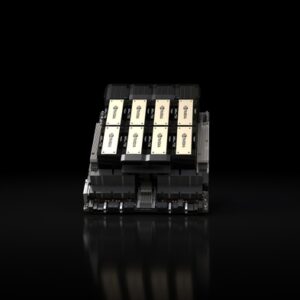

Blackwell architecture flagship — 192GB HBM3e, 8 TB/s bandwidth, up to 18 PFLOPS FP4 sparse per GPU. The next generation of AI training and inference.

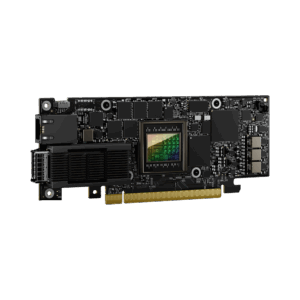

400 Gb/s data processing unit offloading networking, security, and storage from server CPUs. The infrastructure backbone of the modern AI factory.

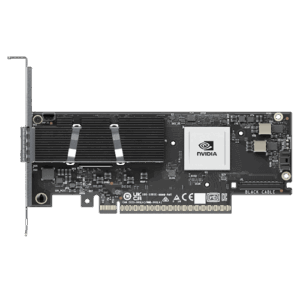

800 Gb/s SuperNIC accelerating East-West GPU traffic in AI factories. Native support for InfiniBand and Ethernet, in-network computing, and PCIe Gen 6.

Enterprise AI factory node — 8x Blackwell Ultra SXM GPUs, 2.1TB GPU memory, 144 PFLOPS FP4, NVLink Switch, in a 10U rack-mount system.

Rack-scale AI factory — 72 Blackwell Ultra GPUs, 36 Grace CPUs, 38TB fast memory, 1.1 exaFLOPS FP4 dense, liquid-cooled for maximum AI reasoning performance.

Gigascale AI factory infrastructure — scalable from 8 to 128+ DGX racks with up to 9,216 GPUs, Quantum-X800 InfiniBand or Spectrum-X Ethernet networking.

Dual-GPU PCIe accelerator with 94 GB HBM3 per card and an NVLink bridge delivering 600 GB/s GPU-to-GPU bandwidth. Purpose-built to supercharge LLM inference in mainstream PCIe servers.

The GPU that launched the AI era — 80GB HBM3, 3.35 TB/s bandwidth, 3,958 TFLOPS FP8, NVLink 900 GB/s in an SXM form factor for HGX and DGX systems.

The first PCIe GPU with HBM3e — 141 GB of memory at 4.8 TB/s. Hopper architecture with NVLink bridge, purpose-built to deploy large language models and generative AI in mainstream enterprise servers.

Hopper architecture with HBM3e — 141GB memory, 4.8 TB/s bandwidth, up to 2x faster LLM inference vs H100. The memory-optimized AI GPU.

Ada Lovelace inference accelerator with 24 GB memory in a compact 72W low-profile, single-slot form factor. Delivers universal acceleration for AI inference, video, and graphics at the edge and in the cloud.

Ada Lovelace universal data center GPU with 48 GB GDDR6 ECC and 18,176 CUDA cores. Purpose-built for VDI, Omniverse digital twins, rendering, and mixed graphics + AI workloads.