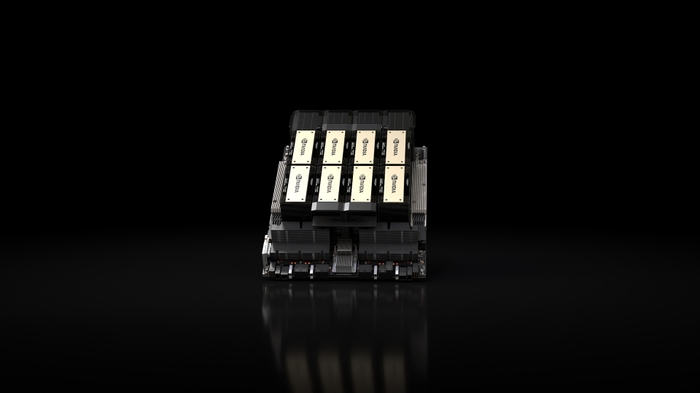

NVIDIA H200 SXM

Hopper architecture with HBM3e — 141GB memory, 4.8 TB/s bandwidth, up to 2x faster LLM inference vs H100. The memory-optimized AI GPU.

🚀 Express Shipping Available Across Europe & MENA

- Full Insurance on All Shipments

- Tracked Delivery & Real-Time Updates

Overview

The NVIDIA H200 SXM is the memory-enhanced evolution of the H100. It features 141GB of HBM3e memory, nearly double the capacity of the H100, with 4.8 TB/s of bandwidth. Built on the same Hopper architecture, it delivers up to 2x faster inference on large language models such as Llama 2 compared to the H100, largely by relieving memory capacity and bandwidth bottlenecks.

The H200 is the first GPU to feature HBM3e, which makes it a strong fit for memory-bound AI workloads, where model size and batch size are limited by GPU memory capacity.
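As a rough illustration of the capacity point, the sketch below estimates how much memory is left after loading model weights. The 80GB H100 figure and the 70-billion-parameter model size are assumptions for illustration and are not taken from this page; real deployments also need room for activations, KV cache, and framework overhead.

```python
H200_MEMORY_GB = 141  # capacity quoted in the overview above
H100_MEMORY_GB = 80   # assumption: H100 SXM capacity, not stated on this page


def weight_footprint_gb(params_billions: float, bytes_per_param: float) -> float:
    """Approximate weight memory in decimal GB (billions of params x bytes each)."""
    return params_billions * bytes_per_param


def headroom_gb(capacity_gb: float, params_billions: float, bytes_per_param: float) -> float:
    """Memory left after weights; a negative value means the weights do not fit."""
    return capacity_gb - weight_footprint_gb(params_billions, bytes_per_param)


if __name__ == "__main__":
    # Hypothetical 70B-parameter model at FP16 (2 bytes) and FP8 (1 byte).
    for label, nbytes in (("FP16", 2), ("FP8", 1)):
        print(label,
              "H200 headroom:", headroom_gb(H200_MEMORY_GB, 70, nbytes), "GB,",
              "H100 headroom:", headroom_gb(H100_MEMORY_GB, 70, nbytes), "GB")
```

Under these assumptions, FP8 weights leave far more room for KV cache and batching on the H200, and the FP16 weights (about 140GB) only fit on a single H200 with almost no headroom.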

Key Features

- 141GB HBM3e: Nearly 2x the memory of H100 for larger models and batches

- 4.8 TB/s Bandwidth: 1.4x the bandwidth of H100 for faster data movement

- Up to 2x LLM Inference: Dramatic speedup on memory-bound workloads

- Hopper Compute: Same 3,958 TFLOPS peak FP8 Tensor Core performance as H100 (with sparsity)

- NVLink 900 GB/s: Full NVLink connectivity for multi-GPU scaling

- Drop-In Upgrade: Software-compatible with H100 deployments
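To see why bandwidth matters for inference, a common back-of-envelope bound assumes that single-stream token generation reads every weight once per token, so throughput cannot exceed bandwidth divided by weight size. The sketch below applies that bound. The 3.35 TB/s H100 figure and the 70GB weight footprint are assumptions, and real throughput depends on batching, KV cache traffic, and kernel efficiency.

```python
H200_BANDWIDTH_TB_S = 4.8   # from the feature list above
H100_BANDWIDTH_TB_S = 3.35  # assumption: H100 SXM bandwidth, not stated on this page


def decode_tokens_per_s_bound(bandwidth_tb_s: float, weights_gb: float) -> float:
    """Upper bound on batch-1 decode speed, assuming one full weight read per token."""
    gb_per_s = bandwidth_tb_s * 1000.0  # TB/s -> GB/s (decimal units)
    return gb_per_s / weights_gb


def bandwidth_ratio(new_tb_s: float, old_tb_s: float) -> float:
    """How many times more bandwidth the newer part offers."""
    return new_tb_s / old_tb_s


if __name__ == "__main__":
    weights_gb = 70.0  # hypothetical: 70B parameters stored in FP8
    print("H200 bound:", round(decode_tokens_per_s_bound(H200_BANDWIDTH_TB_S, weights_gb), 1))
    print("H100 bound:", round(decode_tokens_per_s_bound(H100_BANDWIDTH_TB_S, weights_gb), 1))
    print("ratio:", round(bandwidth_ratio(H200_BANDWIDTH_TB_S, H100_BANDWIDTH_TB_S), 2))
```

The bandwidth ratio alone comes out near 1.4x, so gains approaching the quoted 2x would presumably also rely on the larger batches that the extra capacity allows.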

Technical Specifications

| Specification | Details |
|---|---|
| GPU Architecture | NVIDIA Hopper |
| Memory | 141 GB HBM3e |
| Memory Bandwidth | 4.8 TB/s |
| FP32 | 67 TFLOPS |
| FP16/BF16 Tensor | 1,979 TFLOPS (with sparsity) |
| FP8 Tensor | 3,958 TFLOPS (with sparsity) |
| INT8 Tensor | 3,958 TOPS (with sparsity) |
| TDP | Up to 700 W (configurable) |
| NVLink | 900 GB/s (4th Gen) |
| PCIe | Gen5, 128 GB/s |
| MIG | Up to 7 instances |
| Form Factor | SXM |
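One way to read the table is through a roofline-style ridge point: peak compute divided by memory bandwidth gives the arithmetic intensity (FLOPs per byte moved) below which a kernel is limited by memory rather than compute. The sketch below is a simplification, and it assumes dense throughput is half the quoted Tensor Core figure, which holds only if the table lists sparsity-enabled peaks.

```python
FP8_TENSOR_TFLOPS = 3958      # FP8 Tensor figure from the table above
MEMORY_BANDWIDTH_TB_S = 4.8   # memory bandwidth from the table above
DENSE_FRACTION = 0.5          # assumption: quoted peak is a sparsity figure (2x dense)


def ridge_point_flops_per_byte(peak_tflops: float, bandwidth_tb_s: float) -> float:
    """Arithmetic intensity where the compute and memory limits meet.

    TFLOPS divided by TB/s leaves FLOPs per byte, since the tera prefixes cancel.
    """
    return peak_tflops / bandwidth_tb_s


if __name__ == "__main__":
    quoted = ridge_point_flops_per_byte(FP8_TENSOR_TFLOPS, MEMORY_BANDWIDTH_TB_S)
    dense = ridge_point_flops_per_byte(FP8_TENSOR_TFLOPS * DENSE_FRACTION,
                                       MEMORY_BANDWIDTH_TB_S)
    print("ridge point (quoted peak):", round(quoted), "FLOPs/byte")
    print("ridge point (assumed dense):", round(dense), "FLOPs/byte")
```

Kernels whose intensity sits well below a few hundred FLOPs per byte, as LLM token generation at small batch sizes typically does, are bandwidth-limited, which is where the H200's memory improvements show up.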

Ideal Use Cases

- Large language model inference where memory capacity is the bottleneck

- AI training with larger batch sizes and model parallelism

- Inference serving with higher throughput per GPU

- Drop-in H100 replacement for memory-constrained workloads
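The batch-size benefit can be sketched by estimating how many concurrent sequences fit in the memory left after weights. The model shape below (80 layers, 8 key/value heads, head dimension 128, FP16 cache) is an assumed Llama-2-70B-like configuration, and the headroom values (71GB and 10GB) are hypothetical figures for FP8 weights on a 141GB and an 80GB GPU. None of these numbers come from this page.

```python
def kv_bytes_per_token(layers: int, kv_heads: int, head_dim: int, bytes_per_value: int) -> int:
    """KV cache bytes stored per token; the factor 2 covers one key and one value per layer."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value


def max_sequences(headroom_gb: float, bytes_per_token: int, context_tokens: int) -> int:
    """Whole sequences of the given length that fit in the leftover memory (decimal GB)."""
    budget_bytes = int(headroom_gb * 1e9)
    return budget_bytes // (bytes_per_token * context_tokens)


if __name__ == "__main__":
    # Assumed Llama-2-70B-like shape with an FP16 (2-byte) KV cache.
    per_token = kv_bytes_per_token(layers=80, kv_heads=8, head_dim=128, bytes_per_value=2)
    for name, headroom in (("141GB GPU", 71), ("80GB GPU", 10)):
        print(name, "->", max_sequences(headroom, per_token, context_tokens=4096),
              "sequences at 4096 tokens")
```

Under these assumptions the larger card holds several times as many full-length sequences, which is the mechanism behind higher serving throughput per GPU. Actual limits depend on the serving framework, cache precision, and fragmentation.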

Why Choose This Product?

If your AI workloads are limited by GPU memory rather than compute, the H200 is likely the most impactful upgrade. With 141GB of HBM3e and 4.8 TB/s bandwidth, it can deliver up to 2x the inference throughput on large models without changes to your software stack, which makes it a fast path to better AI performance for existing Hopper deployments.

Interested? Contact us for HGX H200 configurations and upgrade planning from H100.
