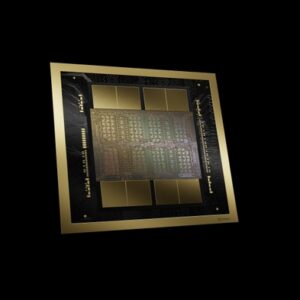

NVIDIA B200

Blackwell architecture flagship — 192GB HBM3e, 8 TB/s bandwidth, up to 18 PFLOPS FP4 sparse per GPU. The next generation of AI training and inference.

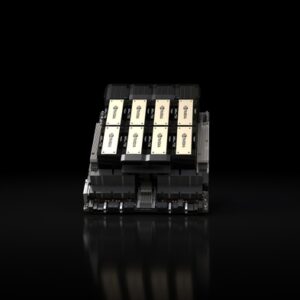

Enterprise AI factory node — 8x Blackwell Ultra SXM GPUs, 2.1TB GPU memory, 144 PFLOPS FP4, NVLink Switch, in a 10U rack-mount system.

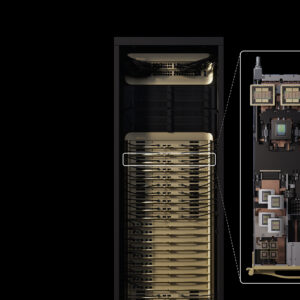

Rack-scale AI factory — 72 Blackwell Ultra GPUs, 36 Grace CPUs, 38TB fast memory, 1.1 exaFLOPS FP4 dense, liquid-cooled for maximum AI reasoning performance.

The ultimate desktop AI supercomputer — GB300 Grace Blackwell Ultra with 20 PFLOPS AI performance, 748GB coherent memory, supporting models up to 1 trillion parameters.

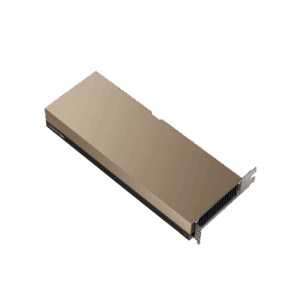

The first PCIe GPU with HBM3e — 141 GB of memory at 4.8 TB/s. Hopper architecture with NVLink bridge, purpose-built to deploy large language models and generative AI in mainstream enterprise servers.

Hopper architecture with HBM3e — 141GB memory, 4.8 TB/s bandwidth, up to 2x faster LLM inference vs H100. The memory-optimized AI GPU.

A new class of NVIDIA GPU purpose-built for massive-context inference. Rubin CPX accelerates million-token reasoning, video understanding, and long-context agents with disaggregated inference design.