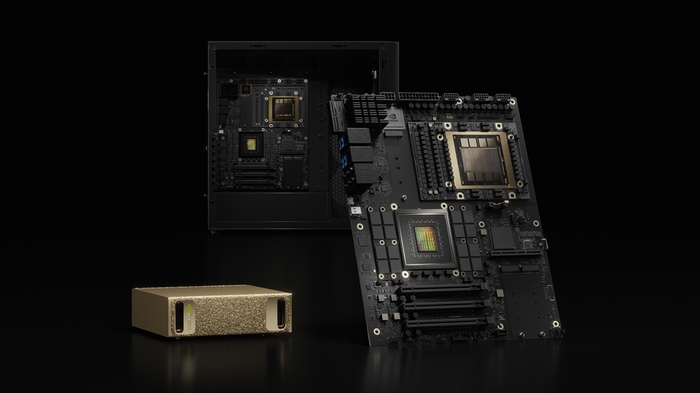

NVIDIA DGX Station

The ultimate desktop AI supercomputer: GB300 Grace Blackwell Ultra with 20 PFLOPS of AI performance and 748GB of coherent memory, supporting models of up to 1 trillion parameters.

🚀 Express Shipping Available Across Europe & MENA

- Full Insurance on All Shipments

- Tracked Delivery & Real-Time Updates

Overview

The NVIDIA DGX Station is the most powerful desktop AI system ever built. Powered by the NVIDIA GB300 Grace Blackwell Ultra Desktop Superchip, it delivers 20 petaFLOPS of FP4 AI performance with 748GB of coherent memory. Researchers and developers can train, fine-tune, and run inference on AI models with up to 1 trillion parameters, all from their desk.

The GB300 Desktop Superchip features a Blackwell Ultra GPU with latest-generation Tensor Cores connected to a 72-core NVIDIA Grace CPU via NVLink-C2C at 900 GB/s. With 252GB of HBM3e GPU memory and 496GB of LPDDR5X CPU memory in a single coherent address space, DGX Station eliminates the memory walls that constrain AI development.
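To put the memory figures in context, here is a rough back-of-the-envelope sketch of how much space model weights alone would take at different precisions, compared with the 748GB coherent pool. It assumes decimal gigabytes (1 GB = 1e9 bytes) and counts weights only; activations, optimizer state, and KV cache are deliberately left out, so real workloads need more headroom. The function names are illustrative, not part of any NVIDIA tool.

```python
# Weight-only memory estimate (assumption: 1 GB = 1e9 bytes).
# Ignores activations, optimizer state, and KV cache.

HBM3E_GB = 252      # GPU memory, from the product description
LPDDR5X_GB = 496    # CPU memory, from the product description
COHERENT_GB = HBM3E_GB + LPDDR5X_GB  # 748 GB in one address space

# Bytes needed to store a single parameter at each precision.
BYTES_PER_PARAM = {"FP4": 0.5, "FP8": 1.0, "FP16": 2.0}


def weight_footprint_gb(num_params, bytes_per_param):
    """Return the size of the model weights in decimal GB."""
    return num_params * bytes_per_param / 1e9


def fits_in_coherent_memory(num_params, precision):
    """True if the weights alone fit in the 748 GB coherent pool."""
    size_gb = weight_footprint_gb(num_params, BYTES_PER_PARAM[precision])
    return size_gb <= COHERENT_GB


if __name__ == "__main__":
    one_trillion = 1e12
    for precision, nbytes in BYTES_PER_PARAM.items():
        size_gb = weight_footprint_gb(one_trillion, nbytes)
        verdict = "fits" if size_gb <= COHERENT_GB else "does not fit"
        print(f"{precision}: {size_gb:.0f} GB of weights, {verdict} in {COHERENT_GB} GB")
```

Under these assumptions, a 1-trillion-parameter model needs about 500GB for its weights at FP4, which fits in the coherent pool, while the same model at FP8 or FP16 would not.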

Key Features

- 20 PFLOPS AI Performance: GB300 Grace Blackwell Ultra Desktop Superchip

- 748GB Coherent Memory: 252GB HBM3e (7.1 TB/s) + 496GB LPDDR5X (396 GB/s)

- 1 Trillion Parameter Models: Train and run inference on the largest AI models locally

- ConnectX-8 SuperNIC: Up to 800 Gb/s Ethernet networking

- MIG Support: Partition into up to 7 isolated GPU instances

- 72-Core Grace CPU: Neoverse V2 cores for balanced CPU+GPU workloads

- Desktop Form Factor: No data center required

Technical Specifications

| Specification | Details |
|---|---|
| Processor | NVIDIA GB300 Grace Blackwell Ultra Desktop Superchip |
| GPU | NVIDIA Blackwell Ultra |
| CPU | 72-core NVIDIA Grace (Neoverse V2) |
| GPU Memory | 252 GB HBM3e (7.1 TB/s) |
| CPU Memory | 496 GB LPDDR5X (396 GB/s) |
| Total Coherent Memory | 748 GB |
| Interconnect | NVLink-C2C (900 GB/s) |
| FP4 Tensor (Sparse) | 153 PFLOPS |
| FP4 Tensor (Dense) | 20 PFLOPS |
| FP8 Tensor | 10 PFLOPS |
| Networking | ConnectX-8 SuperNIC (2x QSFP112 400GbE), 10GbE, 1GbE BMC |
| Storage | 4x M.2 Gen 5 NVMe slots |
| PCIe | 1x Gen 5 x16, 2x Gen 5 x16 (x8 electrical) |
| Power | 1,600W |
| MIG | Up to 7 instances |
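The two memory tiers differ sharply in bandwidth, which matters when deciding what to keep in HBM3e and what to leave in LPDDR5X. As a rough illustration, the sketch below computes how long one full read of each tier would take at the listed peak bandwidth. It assumes decimal units (1 TB/s = 1000 GB/s) and treats the listed figures as sustained rates, so the results are best-case lower bounds rather than measured numbers. The names used are illustrative only.

```python
# Best-case time to read each memory tier once at its listed peak bandwidth.
# Assumption: decimal units, peak bandwidth sustained for the whole read.

TIERS = {
    "HBM3e": {"capacity_gb": 252, "bandwidth_gb_s": 7100},   # 7.1 TB/s
    "LPDDR5X": {"capacity_gb": 496, "bandwidth_gb_s": 396},  # 396 GB/s
}


def sweep_time_s(capacity_gb, bandwidth_gb_s):
    """Seconds needed to stream capacity_gb at bandwidth_gb_s."""
    return capacity_gb / bandwidth_gb_s


if __name__ == "__main__":
    for name, tier in TIERS.items():
        seconds = sweep_time_s(tier["capacity_gb"], tier["bandwidth_gb_s"])
        print(f"{name}: {seconds * 1000:.1f} ms per full read")
```

With these figures, one pass over the HBM3e takes roughly 35 ms, while one pass over the LPDDR5X takes about 1.25 s, so bandwidth-sensitive data is best placed in GPU memory where possible.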

Ideal Use Cases

- Large-scale AI model training and fine-tuning at the desktop

- AI research on frontier models with trillion-parameter scale

- Multi-user AI development via MIG partitioning (up to 7 isolated instances)

- Enterprise AI labs and centers of excellence

- Agentic AI and multi-step reasoning development

Why Choose This Product?

DGX Station is a data center in a box. With 748GB of coherent memory and 20 PFLOPS of AI compute, it handles workloads that previously required a server rack, all in a desktop form factor. For AI research labs, enterprise development teams, and organizations that need to train and deploy the largest models with full data sovereignty, DGX Station keeps that work local, at the desk.

Interested? Contact us for pricing, deployment planning, and multi-station configurations.
