NVIDIA H100 NVL

Dual-GPU PCIe accelerator with 94 GB HBM3 per card and an NVLink bridge delivering 600 GB/s GPU-to-GPU bandwidth. Purpose-built to supercharge LLM inference in mainstream PCIe servers.


Overview

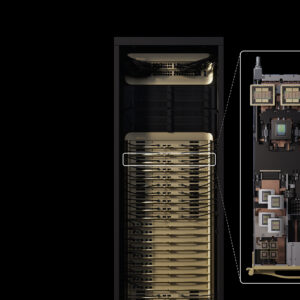

The NVIDIA H100 NVL is a dual-GPU PCIe accelerator built for large language model (LLM) inference on mainstream enterprise servers. Based on the NVIDIA Hopper architecture with a built-in Transformer Engine, H100 NVL delivers, by NVIDIA's figures, up to 5x the LLM inference performance of prior-generation NVIDIA A100 systems, which makes it well suited to deploying ChatGPT-class models at scale.

With 94 GB of HBM3 memory and 3.9 TB/s of memory bandwidth per GPU, plus an NVLink bridge connecting both cards at 600 GB/s, H100 NVL eases the memory and interconnect bottlenecks that slow down generative AI workloads in standard PCIe systems.
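As a rough illustration of what the memory figures above mean in practice, the sketch below estimates whether a model's weights fit on one GPU or on the bridged pair. It is a back-of-the-envelope calculation only: it counts weights alone, treats 1 GB as 10^9 bytes, and the 70-billion-parameter model and the `headroom` fraction are assumptions chosen for illustration, not vendor guidance.

```python
# Capacity figures taken from the text above (per GPU, and the bridged pair).
GPU_MEMORY_GB = 94
PAIR_MEMORY_GB = 2 * GPU_MEMORY_GB  # 188 GB across both cards

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp16": 2, "fp8": 1}


def weight_footprint_gb(num_params: float, precision: str) -> float:
    """Memory needed for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits(num_params: float, precision: str, capacity_gb: float,
         headroom: float = 0.8) -> bool:
    """True if the weights fit within `headroom` of the given capacity.

    `headroom` is a hypothetical allowance: the remaining fraction is left
    for the KV cache, activations, and runtime overhead.
    """
    return weight_footprint_gb(num_params, precision) <= capacity_gb * headroom


if __name__ == "__main__":
    params = 70e9  # illustrative 70-billion-parameter model (assumption)
    for precision in BYTES_PER_PARAM:
        size = weight_footprint_gb(params, precision)
        print(f"{precision}: {size:.0f} GB of weights, "
              f"one GPU: {fits(params, precision, GPU_MEMORY_GB)}, "
              f"pair: {fits(params, precision, PAIR_MEMORY_GB)}")
```

Under these assumptions, FP16 weights for a 70B model need the bridged pair, while FP8 weights fit on a single GPU. Real deployments also need room for the KV cache, which grows with batch size and context length.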

Key Features

- Purpose-Built for LLMs: Up to 5x faster inference than A100 systems on models such as Llama 2 70B, according to NVIDIA.

- NVLink Bridge: 600 GB/s GPU-to-GPU bandwidth connecting the dual-card pair.

- 94 GB HBM3: 94 GB per GPU, or 188 GB across the bridged pair, a large memory capacity for a PCIe form factor.

- Transformer Engine: FP8 precision support that accelerates LLM training and inference.

- NVIDIA AI Enterprise: Five-year subscription bundled in.

Technical Specifications

| Specification | Value (per GPU) |
|---|---|
| GPU Architecture | NVIDIA Hopper |
| GPU Memory | 94 GB HBM3 |
| Memory Bandwidth | 3.9 TB/s |
| FP64 / FP64 Tensor Core | 30 TFLOPS / 60 TFLOPS |
| FP32 | 60 TFLOPS |
| TF32 Tensor Core | 835 TFLOPS (with sparsity) |
| BFLOAT16 / FP16 Tensor Core | 1,671 TFLOPS (with sparsity) |
| FP8 Tensor Core | 3,341 TFLOPS (with sparsity) |
| INT8 Tensor Core | 3,341 TOPS (with sparsity) |
| Max Thermal Design Power (TDP) | 350–400 W (configurable) |
| Interconnect | NVLink bridge (600 GB/s), PCIe Gen5 |
| Form Factor | Two dual-slot PCIe cards, air cooled |
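
Two of the figures in the table lend themselves to quick arithmetic, sketched below. The first helper assumes the "with sparsity" Tensor Core numbers are twice the dense rate, as is the case for 2:4 structured sparsity. The second gives a rough upper bound on single-sequence decode speed by assuming every generated token requires reading all model weights from HBM once; it ignores KV-cache traffic, batching, and kernel efficiency, so real throughput will be lower. The function names and the 70 GB example model are illustrative assumptions.

```python
MEMORY_BANDWIDTH_TB_S = 3.9   # per GPU, from the specification table
FP8_SPARSE_TFLOPS = 3341      # FP8 Tensor Core, with sparsity


def dense_from_sparse(sparse_rate: float) -> float:
    """Dense rate implied by a 'with sparsity' figure (assumes a 2x ratio)."""
    return sparse_rate / 2


def decode_ceiling_tokens_per_s(bandwidth_tb_s: float, weights_gb: float) -> float:
    """Bandwidth-bound ceiling on tokens per second for one sequence.

    Assumes each token reads all weights once: bandwidth / weight size.
    """
    return (bandwidth_tb_s * 1e12) / (weights_gb * 1e9)


if __name__ == "__main__":
    print(f"FP8 dense: {dense_from_sparse(FP8_SPARSE_TFLOPS)} TFLOPS")
    # Illustrative model: 70 GB of weights (e.g. 70B parameters in FP8).
    ceiling = decode_ceiling_tokens_per_s(MEMORY_BANDWIDTH_TB_S, 70)
    print(f"Decode ceiling: {ceiling:.1f} tokens/s per sequence")
```

Batching several requests together amortizes each weight read over more tokens, which is why serving throughput can far exceed this single-sequence ceiling.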

Ideal Use Cases

- Large language model (LLM) inference and serving

- Generative AI deployment in standard PCIe servers

- Fine-tuning foundation models on enterprise data

- HPC and scientific computing workloads

- Mixed AI and HPC data center consolidation

Why Choose This Product?

H100 NVL is the PCIe form-factor alternative to the HGX H100 platform, giving enterprises access to Hopper-class LLM performance in mainstream 2U and 4U servers. Contact us for configuration guidance, NVLink bridge pairing, and volume discounts on multi-node deployments.

