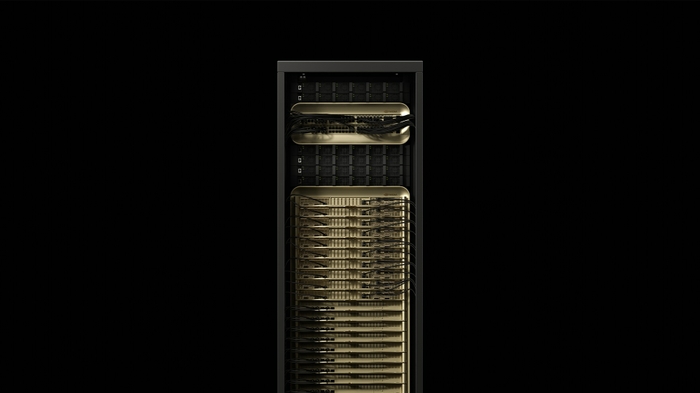

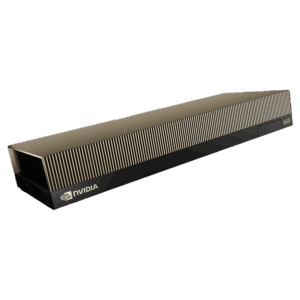

NVIDIA DGX GB300 NVL72

Rack-scale AI factory with 72 Blackwell Ultra GPUs, 36 Grace CPUs, 38 TB of fast memory, and 1.1 exaFLOPS of dense FP4 compute, fully liquid-cooled for AI reasoning workloads.


Overview

The NVIDIA DGX GB300 NVL72 is a fully liquid-cooled, rack-scale AI system that integrates 72 NVIDIA Blackwell Ultra GPUs and 36 NVIDIA Grace CPUs into a single, unified computing platform. With 1.1 exaFLOPS of dense FP4 compute and 38 TB of fast memory, it is positioned as the highest-performance AI system available to enterprises.

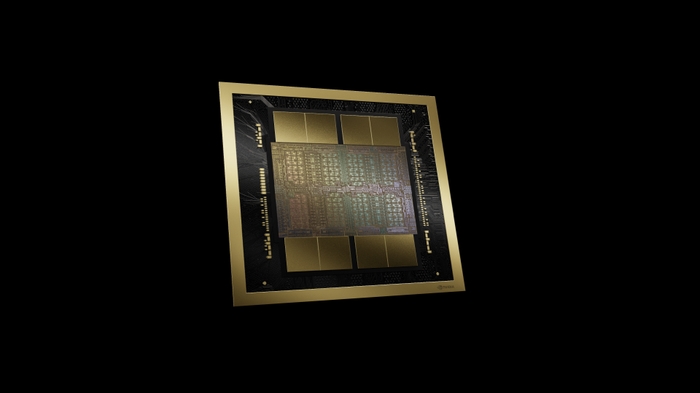

Purpose-built for test-time scaling inference and AI reasoning, the DGX GB300 NVL72 delivers up to a 50x overall increase in AI factory output compared to Hopper-based platforms. The 72 GPUs are connected via fifth-generation NVLink with 130 TB/s of aggregate bandwidth, creating a single, massive shared memory space for the largest AI models.

Key Features

- 1.1 exaFLOPS FP4 Dense: The highest-performance AI system available

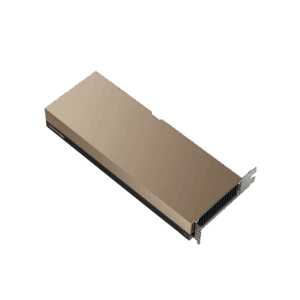

- 72 Blackwell Ultra GPUs + 36 Grace CPUs: Unified rack-scale compute

- 38TB Fast Memory: Massive shared memory space via NVLink

- 130 TB/s NVLink Bandwidth: 72-GPU all-to-all connectivity

- Liquid-Cooled: Maximum performance density with efficient cooling

- 50x Hopper Performance: Generational leap in AI factory throughput

Technical Specifications

| Specification | Details |

|---|---|

| GPUs | 72x NVIDIA Blackwell Ultra |

| CPUs | 36x NVIDIA Grace |

| Fast Memory | 38 TB |

| AI Performance (FP4 Dense) | 1.1 exaFLOPS |

| NVLink Bandwidth | 130 TB/s aggregate |

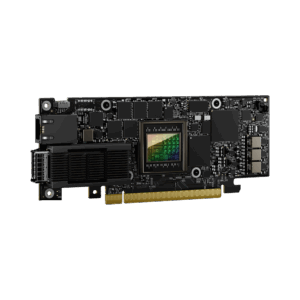

| Networking | Quantum-X800 InfiniBand or Spectrum-X Ethernet, ConnectX-8 SuperNICs |

| Cooling | Fully liquid-cooled |

| Form Factor | Rack-scale system |
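For capacity planning, it can help to break the rack-level figures above into per-GPU shares. The following is a minimal sketch that divides the quoted totals evenly across the 72 GPUs. The function and constant names are illustrative, and the even split is an assumption: this page does not break fast memory down by type, so the memory share is not a per-GPU HBM capacity.

```python
# Rack-level figures quoted in the specification table.
NUM_GPUS = 72
NUM_CPUS = 36
FP4_DENSE_EXAFLOPS = 1.1
FAST_MEMORY_TB = 38
NVLINK_AGGREGATE_TB_PER_S = 130


def per_gpu_shares():
    """Divide rack-level totals evenly across the GPUs (an assumption)."""
    return {
        # 1 exaFLOPS = 1000 petaFLOPS
        "fp4_dense_pflops": FP4_DENSE_EXAFLOPS * 1000 / NUM_GPUS,
        "nvlink_tb_per_s": NVLINK_AGGREGATE_TB_PER_S / NUM_GPUS,
        # Even split of all fast memory, not per-GPU HBM capacity.
        "fast_memory_tb": FAST_MEMORY_TB / NUM_GPUS,
        "gpus_per_cpu": NUM_GPUS / NUM_CPUS,
    }


if __name__ == "__main__":
    for name, value in per_gpu_shares().items():
        print(f"{name}: {value:.2f}")
```

Under these assumptions each GPU accounts for roughly 15.3 petaFLOPS of dense FP4 compute and about 1.8 TB/s of NVLink bandwidth, with two GPUs per Grace CPU.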

Ideal Use Cases

- Training frontier AI models — trillion+ parameter LLMs and multimodal models

- AI reasoning and agentic AI systems with test-time scaling

- Real-time video generation from world foundation models

- Large-scale enterprise AI factory deployments

- National AI research infrastructure
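As a rough sizing aid for the trillion-parameter use case, the sketch below estimates how much of the 38 TB of fast memory a model's weights alone would occupy at a given precision. The helper names are hypothetical, and the estimate deliberately ignores KV cache, activations, and optimizer state, all of which can dominate memory use in practice, especially during training.

```python
FAST_MEMORY_TB = 38  # rack-level fast memory from the specification table


def weight_footprint_tb(num_params, bits_per_param):
    """Memory for model weights only, in decimal terabytes (1 TB = 1e12 bytes)."""
    return num_params * bits_per_param / 8 / 1e12


def fraction_of_fast_memory(num_params, bits_per_param):
    """Share of the rack's fast memory taken by the weights alone."""
    return weight_footprint_tb(num_params, bits_per_param) / FAST_MEMORY_TB


if __name__ == "__main__":
    one_trillion = 1_000_000_000_000
    for bits in (4, 8, 16):
        tb = weight_footprint_tb(one_trillion, bits)
        share = fraction_of_fast_memory(one_trillion, bits)
        print(f"{bits}-bit weights: {tb:.1f} TB ({share:.1%} of fast memory)")
```

By this estimate, a one-trillion-parameter model stored in FP4 needs about 0.5 TB for weights, leaving most of the fast memory for the other components the sketch leaves out.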

Why Choose This Product?

The DGX GB300 NVL72 is the most powerful AI system available for enterprises and research institutions. At 1.1 exaFLOPS of dense FP4 compute with 38 TB of fast memory, it handles the largest and most complex AI workloads, from training frontier models to powering real-time AI reasoning at scale. For organizations building the future of AI, this is the infrastructure that makes it possible.

Interested? Contact us for infrastructure planning, data center requirements, and enterprise pricing.
