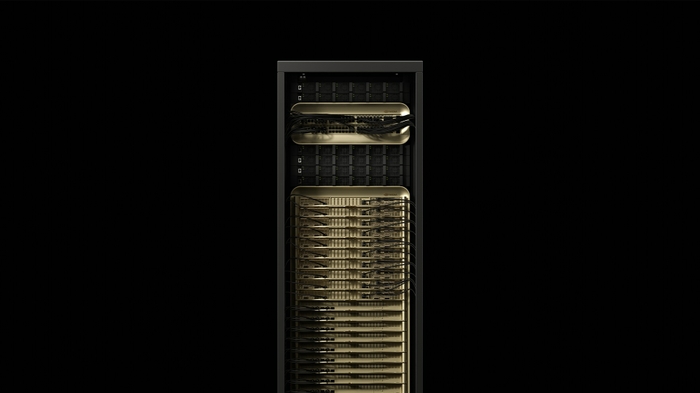

NVIDIA DGX B300

Enterprise AI factory node — 8x Blackwell Ultra SXM GPUs, 2.1TB GPU memory, 144 PFLOPS FP4, NVLink Switch, in a 10U rack-mount system.

🚀 Express Shipping Available Across Europe & MENA

- Full Insurance on All Shipments

- Tracked Delivery & Real-Time Updates

Overview

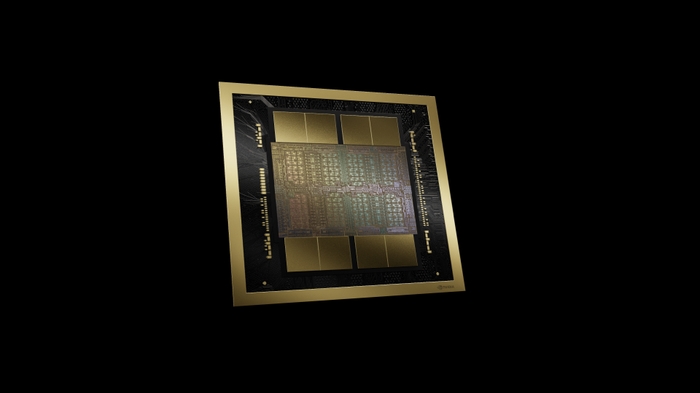

The NVIDIA DGX B300 is a data center-scale AI system built for enterprise AI factories. Featuring 8 NVIDIA Blackwell Ultra SXM GPUs with 2.1TB of total GPU memory and 144 PFLOPS of FP4 sparse performance, it is the building block for large-scale AI training, post-training, and inference infrastructure.

Connected via fifth-generation NVLink with 14.4 TB/s aggregate bandwidth and equipped with NVIDIA ConnectX-8 VPI networking at 800 Gb/s per port, the DGX B300 delivers the performance and connectivity needed for the most demanding AI workloads — from training frontier LLMs to powering real-time AI reasoning systems.

Key Features

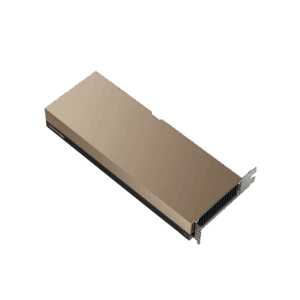

- 8x Blackwell Ultra SXM GPUs: Maximum AI compute density per node

- 2.1TB GPU Memory: HBM3e for training the largest models

- 144 PFLOPS FP4 Sparse: Breakthrough AI training and inference performance

- NVLink Switch System: 14.4 TB/s aggregate GPU-to-GPU bandwidth

- 800 Gb/s Networking: 8x ConnectX-8 VPI + 2x BlueField-3 DPU

- Intel Xeon 6776P CPU: Enterprise-grade host processing

Technical Specifications

| Specification | Details |

|---|---|

| GPUs | 8x NVIDIA Blackwell Ultra SXM |

| CPU | Intel Xeon 6776P |

| GPU Memory | 2.1 TB HBM3e |

| AI Performance (FP4 Sparse) | 144 PFLOPS |

| AI Performance (FP4 Dense) | 108 PFLOPS |

| AI Performance (FP8) | 72 PFLOPS |

| NVLink Bandwidth | 14.4 TB/s aggregate |

| Networking | 8x OSFP (ConnectX-8 VPI 800Gb/s), 2x QSFP112 (BlueField-3 DPU 400Gb/s) |

| OS Storage | 2x 1.9 TB NVMe M.2 |

| Data Storage | 8x 3.84 TB NVMe E1.S |

| Power | ~14 kW |

| Form Factor | 10U rack-mount |

Ideal Use Cases

- Enterprise AI model training at scale — LLMs, multimodal models, foundation models

- AI factory infrastructure for continuous training and inference pipelines

- High-performance computing (HPC) for scientific simulation and research

- Multi-tenant AI platforms via MIG and virtualization

- Agentic AI and reasoning systems requiring massive compute

Why Choose This Product?

The DGX B300 is the enterprise building block for AI at scale. Each node delivers 144 PFLOPS of AI compute with 2.1TB of GPU memory, and multiple DGX B300 systems scale seamlessly into DGX SuperPOD configurations for the largest AI workloads on the planet. For enterprises building private AI factories, DGX B300 is the proven foundation.

Interested? Contact us for infrastructure planning, multi-node configurations, and enterprise pricing.

Reviews

There are no reviews yet.