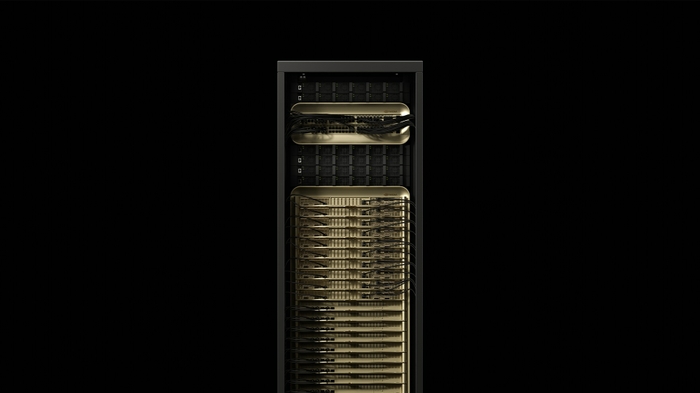

NVIDIA DGX SuperPOD

Gigascale AI factory infrastructure, scalable from 8 to 128+ DGX racks with up to 9,216 GPUs, connected by NVIDIA Quantum-X800 InfiniBand or Spectrum-X Ethernet networking.

Overview

The NVIDIA DGX SuperPOD is the full-stack reference architecture for building gigascale AI infrastructure. Built on scalable units of 8 DGX systems, it enables rapid deployment of AI factories that can scale from dozens to thousands of GPUs — connected via NVIDIA Quantum-X800 InfiniBand or Spectrum-X Ethernet networking.

Configurable with DGX GB300 or DGX B300 systems, DGX SuperPOD provides a proven blueprint for deploying leadership-class AI infrastructure on-premises, in colocation facilities, or in hybrid configurations. It is the architecture powering the world’s most advanced AI research labs and enterprise AI operations.

Key Features

- Scalable Units: Each SU contains 8 DGX systems; deployments scale to 128+ racks

- Up to 9,216 GPUs: Massive compute for the largest AI training runs

- Quantum-X800 InfiniBand: Ultra-low latency inter-node networking

- Spectrum-X Ethernet: Alternative Ethernet-based networking option

- Full-Stack Blueprint: Compute, networking, storage, and management

- NVIDIA Mission Control: AI infrastructure management at scale

Configuration Options

| Configuration | Details |

|---|---|

| Compute Nodes | DGX GB300 NVL72 or DGX B300 |

| Scalable Unit (SU) | 8 DGX systems per SU |

| Maximum Scale | 128+ racks, 9,216+ GPUs |

| Networking Options | Quantum-X800 InfiniBand or Spectrum-X Ethernet |

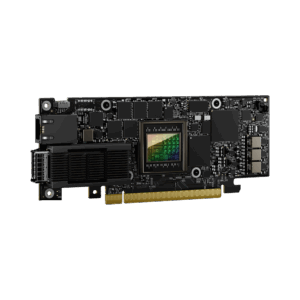

| NICs | ConnectX-8 SuperNICs |

| Management | NVIDIA Mission Control |

| Storage | Partner-provided (leading storage providers) |

| Deployment | On-premises, colocation, or hybrid |
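As a rough sizing aid, the sketch below relates scalable units, racks, and GPU counts. It assumes DGX GB300 NVL72 systems, where each rack-scale system holds 72 GPUs, and takes the figure of 8 DGX systems per SU from the configuration table. The names `SuperPodSize`, `size_from_scalable_units`, and `scalable_units_for_gpus` are hypothetical helpers for illustration only, not part of any NVIDIA tool. Under these assumptions, 16 SUs correspond to 128 racks and 9,216 GPUs.

```python
from dataclasses import dataclass

# Assumption: one DGX GB300 NVL72 rack-scale system holds 72 GPUs.
GPUS_PER_NVL72_RACK = 72
# From the configuration table: 8 DGX systems per scalable unit (SU).
SYSTEMS_PER_SU = 8


@dataclass(frozen=True)
class SuperPodSize:
    """Hypothetical summary of a deployment size (illustration only)."""
    scalable_units: int
    racks: int
    gpus: int


def size_from_scalable_units(scalable_units: int) -> SuperPodSize:
    """Return rack and GPU counts for a given number of SUs."""
    if scalable_units < 1:
        raise ValueError("at least one scalable unit is required")
    racks = scalable_units * SYSTEMS_PER_SU
    return SuperPodSize(scalable_units, racks, racks * GPUS_PER_NVL72_RACK)


def scalable_units_for_gpus(target_gpus: int) -> int:
    """Return the smallest number of SUs that provides at least target_gpus."""
    if target_gpus < 1:
        raise ValueError("target_gpus must be positive")
    gpus_per_su = SYSTEMS_PER_SU * GPUS_PER_NVL72_RACK
    return -(-target_gpus // gpus_per_su)  # ceiling division


if __name__ == "__main__":
    print(size_from_scalable_units(1))
    print(size_from_scalable_units(16))
    print(scalable_units_for_gpus(1000))
```

For DGX B300 deployments the GPU count per system differs, so the constant would need to change. Treat the output as a first estimate and confirm actual sizing during planning.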

Ideal Use Cases

- Enterprise AI factories for continuous model training and deployment

- Hyperscale AI training for frontier foundation models

- National AI research and sovereign AI infrastructure

- Multi-tenant AI platforms serving hundreds of concurrent workloads

- Scientific computing and large-scale simulation clusters

Why Choose This Product?

DGX SuperPOD is the proven blueprint for building AI factories at any scale. Whether you need a single scalable unit or hundreds of racks, the architecture provides a tested path from initial deployment to exascale AI computing. For enterprises and institutions committed to AI leadership, DGX SuperPOD is the infrastructure that delivers.

Interested? Contact us for AI factory planning, sizing guidance, and partner ecosystem introductions.
