NVIDIA A100 80GB

Ampere Tensor Core GPU with 80 GB HBM2e and 2 TB/s memory bandwidth. The proven workhorse for AI training, HPC, and data analytics with Multi-Instance GPU (MIG) for secure partitioning.

🚀 Express Shipping Available Across Europe & MENA

- Full Insurance on All Shipments

- Tracked Delivery & Real-Time Updates

Overview

The NVIDIA A100 80GB is the flagship data center GPU of the NVIDIA Ampere architecture. It combines 80 GB of HBM2e memory delivering up to 2,039 GB/s of bandwidth (SXM), Multi-Instance GPU (MIG) support for up to 7 isolated instances, and broad software ecosystem support. The A100 remains one of the most widely deployed data center GPUs worldwide.

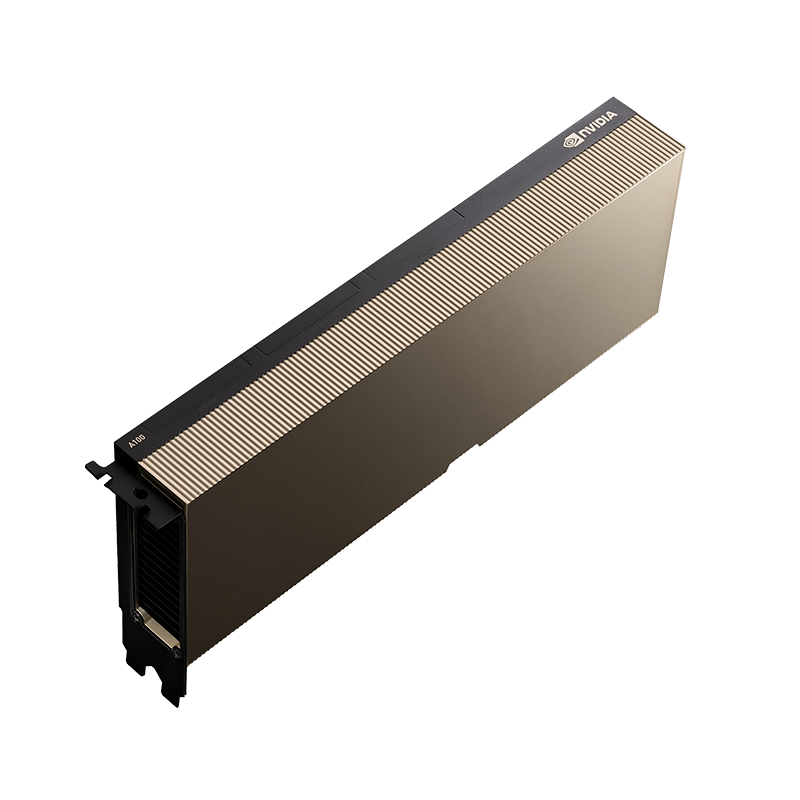

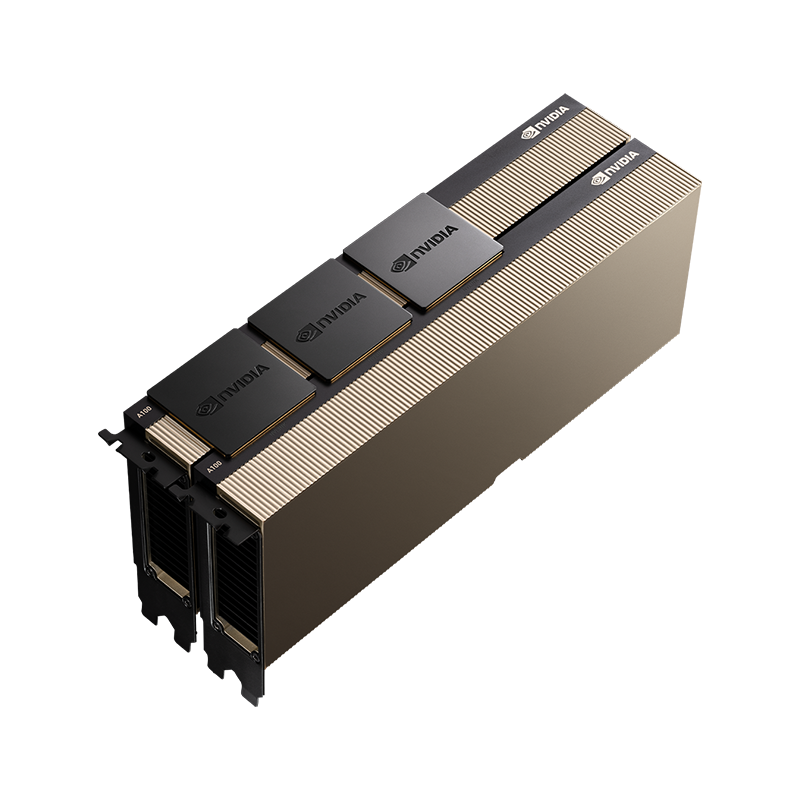

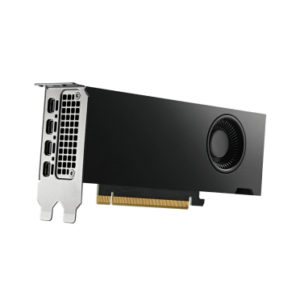

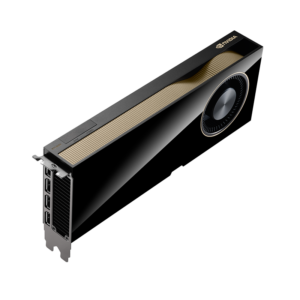

Available in both SXM and PCIe form factors, the A100 fits a wide range of data center deployments. Its third-generation Tensor Cores support TF32 precision, which NVIDIA rates at up to 6x the AI training performance of the previous-generation V100. Structural sparsity support can roughly double Tensor Core throughput on models pruned to a 2:4 sparsity pattern.
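TF32 is a 19-bit format. It keeps FP32's sign bit and 8-bit exponent, so it has the same numeric range as FP32. Its mantissa has only 10 bits, which gives it the same precision as FP16. On the A100, TF32 Tensor Core operations take FP32 inputs, round them to TF32, and accumulate in FP32.

The sketch below emulates that input rounding in plain Python. It assumes round-to-nearest-even; the exact rounding used by hardware and libraries may differ. `round_to_tf32` is a hypothetical helper, not an NVIDIA API.

```python
import struct

def round_to_tf32(x: float) -> float:
    """Emulate TF32 input precision (hypothetical helper, not an NVIDIA API).

    TF32 keeps FP32's sign bit and 8-bit exponent but only 10 of the 23
    mantissa bits. This sketch assumes round-to-nearest-even on the
    13 discarded bits; NaN/Inf handling is omitted for brevity.
    """
    # Reinterpret the value as a 32-bit IEEE 754 single-precision pattern.
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    drop = 23 - 10  # mantissa bits that TF32 does not keep
    lsb = (bits >> drop) & 1  # lowest kept bit, used to break ties to even
    bits += (1 << (drop - 1)) - 1 + lsb
    bits = (bits >> drop) << drop  # clear the discarded bits
    return struct.unpack("<f", struct.pack("<I", bits & 0xFFFFFFFF))[0]

print(round_to_tf32(3.14159265))  # 3.140625
print(round_to_tf32(1 + 2**-11))  # 1.0 (exact tie rounds to even)
```

Because the exponent is unchanged, FP32 code paths can use TF32 without rescaling. The cost is a relative rounding error of about 2^-11 per input.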

Key Features

- 80GB HBM2e: Up to 2,039 GB/s bandwidth (SXM) for memory-intensive workloads

- MIG: Up to 7 isolated GPU instances for multi-tenant efficiency

- Third-Gen Tensor Cores: TF32, FP16, BF16, INT8, and FP64 precision support

- Structural Sparsity: Up to 2x Tensor Core throughput for models pruned to 2:4 structured sparsity

- Dual Form Factors: SXM (400W, NVLink) and PCIe (300W) options

- Proven Ecosystem: Broadest software and ISV support
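The sparse performance figures assume 2:4 fine-grained structured sparsity. In every group of four consecutive weights, at most two are non-zero, and this lets the sparse Tensor Cores skip the zeros.

Below is a minimal magnitude-pruning sketch in plain Python. The helper names `prune_2_of_4` and `is_2_of_4` are hypothetical and are not part of any NVIDIA library.

```python
from typing import List

def prune_2_of_4(weights: List[float]) -> List[float]:
    """Hypothetical helper: in every group of 4 consecutive weights, keep
    the 2 largest-magnitude values and zero the other 2.
    Assumes len(weights) is a multiple of 4 (illustrative only)."""
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned: List[float] = []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        # Indices of the two largest |w| in this group of four.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        pruned.extend(w if i in keep else 0.0 for i, w in enumerate(group))
    return pruned

def is_2_of_4(weights: List[float]) -> bool:
    """Hypothetical check: every group of 4 has at most 2 non-zero values."""
    return all(
        sum(1 for w in weights[s:s + 4] if w != 0.0) <= 2
        for s in range(0, len(weights), 4)
    )

w = [0.1, -0.9, 0.4, 0.05, 1.0, 0.2, -0.3, 0.0]
sparse_w = prune_2_of_4(w)
print(sparse_w)  # [0.0, -0.9, 0.4, 0.0, 1.0, 0.0, -0.3, 0.0]
print(is_2_of_4(sparse_w))  # True
```

In practice, pruning is applied to weight tensors with framework tooling and is typically followed by fine-tuning to recover accuracy. The 2x figure refers to peak Tensor Core math throughput, so end-to-end speedups can be smaller.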

Technical Specifications

| Specification | A100 80GB SXM | A100 80GB PCIe |
|---|---|---|
| GPU Architecture | NVIDIA Ampere | NVIDIA Ampere |
| Memory | 80 GB HBM2e | 80 GB HBM2e |
| Memory Bandwidth | 2,039 GB/s | 1,935 GB/s |
| FP32 | 19.5 TFLOPS | 19.5 TFLOPS |
| TF32 Tensor | 156 TFLOPS (312 with sparsity) | 156 TFLOPS (312 with sparsity) |
| FP16/BF16 Tensor | 312 TFLOPS (624 with sparsity) | 312 TFLOPS (624 with sparsity) |
| INT8 Tensor | 624 TOPS (1,248 with sparsity) | 624 TOPS (1,248 with sparsity) |
| FP64 | 9.7 TFLOPS | 9.7 TFLOPS |
| FP64 Tensor | 19.5 TFLOPS | 19.5 TFLOPS |
| TDP | 400W | 300W |
| NVLink | 600 GB/s | 600 GB/s (NVLink Bridge, 2 GPUs) |
| PCIe | Gen4 (64 GB/s) | Gen4 (64 GB/s) |
| MIG | Up to 7 instances (10 GB each) | Up to 7 instances (10 GB each) |
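A simple roofline estimate lets you read the bandwidth and compute rows together. A kernel is memory-bound until its arithmetic intensity (FLOPs per byte moved from HBM) exceeds peak compute divided by memory bandwidth.

The sketch below uses the SXM figures from the table above. It assumes that dense FP16 Tensor throughput is half the sparse value. `ridge_point` and `attainable_tflops` are illustrative helpers. These are theoretical peaks, and real kernels reach only a fraction of them.

```python
def ridge_point(peak_tflops: float, bandwidth_gb_s: float) -> float:
    """FLOP/byte at which a kernel shifts from memory-bound to compute-bound."""
    return (peak_tflops * 1e12) / (bandwidth_gb_s * 1e9)

def attainable_tflops(intensity: float, peak_tflops: float, bandwidth_gb_s: float) -> float:
    """Simple roofline: min(peak compute, bandwidth * arithmetic intensity)."""
    return min(peak_tflops, bandwidth_gb_s * 1e9 * intensity / 1e12)

# A100 80GB SXM values; dense FP16 Tensor assumed to be sparse / 2.
PEAK_FP16_DENSE_TFLOPS = 624 / 2  # 312 TFLOPS
BANDWIDTH_GB_S = 2039

print(f"ridge point: {ridge_point(PEAK_FP16_DENSE_TFLOPS, BANDWIDTH_GB_S):.0f} FLOP/byte")  # 153

# FP16 elementwise add c = a + b: 1 FLOP per element, 6 bytes moved
# (two 2-byte reads, one 2-byte write), so intensity = 1/6 FLOP/byte.
vec_add = attainable_tflops(1 / 6, PEAK_FP16_DENSE_TFLOPS, BANDWIDTH_GB_S)
print(f"FP16 vector add: ~{vec_add:.2f} TFLOPS")  # ~0.34
```

HBM bandwidth caps an elementwise kernel like this far below peak compute. For many workloads, the memory bandwidth figure therefore matters as much as the TFLOPS figures.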

Ideal Use Cases

- Production AI training and inference with proven reliability

- High-performance computing and scientific simulation

- Multi-tenant cloud GPU services via MIG

- Data analytics acceleration with RAPIDS and Spark

- Organizations expanding existing A100-based infrastructure

Why Choose This Product?

The A100 is one of the most widely deployed and battle-tested data center GPUs in production today. It offers a mature software ecosystem, a long production track record, and strong cost-performance. It remains a solid choice for organizations that need dependable AI infrastructure without the higher power and cooling demands of newer architectures.

Interested? Contact us for DGX A100, HGX A100, and PCIe server configurations.
