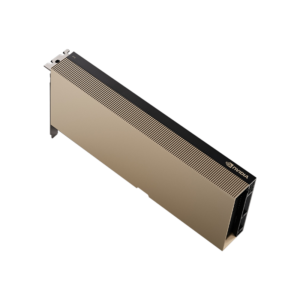

NVIDIA A10

Universal mainstream data center GPU based on Ampere — 24 GB GDDR6, 150W, single-slot. Accelerates graphics, AI inference, virtual workstations, and video in the broadest range of enterprise servers.

Ampere Tensor Core GPU with 80 GB HBM2e and 2 TB/s memory bandwidth. The proven workhorse for AI training, HPC, and data analytics with Multi-Instance GPU (MIG) for secure partitioning.

Quad-GPU Ampere board purpose-built for high-density, graphics-rich VDI — 64 GB GDDR6 ECC, four GPUs per card, and up to 2x the user density for enterprise virtual desktops.

Low-profile, low-power edge inference GPU with 16 GB GDDR6 ECC in a 40–60W envelope. Brings NVIDIA AI to space- and power-constrained edge and enterprise servers.

Industrial-grade edge AI developer kit with Ampere iGPU, optional RTX A6000/6000 Ada dGPU, up to 1,705 TOPS, ConnectX-7 200GbE, 10-year lifecycle support.

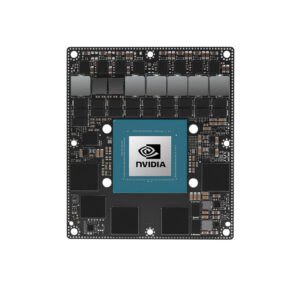

High-performance Jetson module delivering 200 TOPS of AI compute with 1792 CUDA cores and 32GB LPDDR5 — ideal for robotics, edge AI, and autonomous systems.

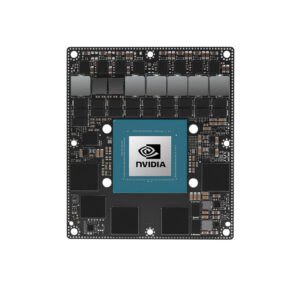

The most powerful Jetson module with 275 TOPS AI performance, 2048 CUDA cores, and 64GB LPDDR5 memory — built for advanced robotics, autonomous machines, and industrial edge AI.

The ultimate Jetson development platform with 275 TOPS, 64GB memory, and a feature-rich carrier board — prototype and validate your most demanding edge AI applications.

The most affordable Jetson Orin module with 34 TOPS AI performance, 512 CUDA cores, and 4GB LPDDR5 — designed for cost-sensitive edge AI products at scale.

Entry-level AI powerhouse with up to 67 TOPS performance, 1024 CUDA cores, and 8GB LPDDR5 — the most accessible path to deploying generative AI at the edge.

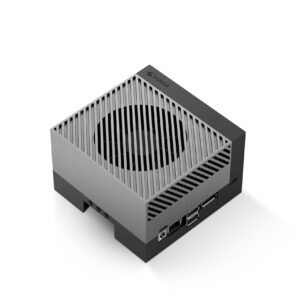

The most affordable generative AI computer — 67 TOPS performance with a complete carrier board, ready to run LLMs and vision AI models out of the box for just $249.

Compact powerhouse delivering 157 TOPS of AI performance with 1024 CUDA cores and 16GB LPDDR5 in a small SO-DIMM form factor — perfect for space-constrained edge AI deployments.

Cost-effective edge AI module with 117 TOPS performance, 1024 CUDA cores, and 8GB LPDDR5 in a compact SO-DIMM form factor for scalable deployments.