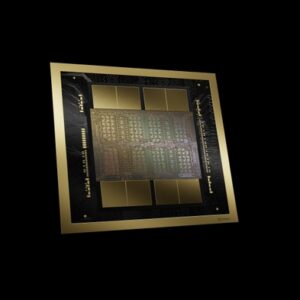

NVIDIA H200 NVL

The first PCIe GPU with HBM3e — 141 GB of memory at 4.8 TB/s. Hopper architecture with NVLink bridge, purpose-built to deploy large language models and generative AI in mainstream enterprise servers.

🚀 Express Shipping Available Across Europe & MENA

- Full Insurance on All Shipments

- Tracked Delivery & Real-Time Updates

Overview

The NVIDIA H200 NVL is a PCIe accelerator for generative AI and high-performance computing (HPC) workloads. Based on the NVIDIA Hopper architecture, it is the first PCIe GPU to feature HBM3e memory, delivering 141 GB at 4.8 TB/s: 1.5x the memory and roughly 1.2x the bandwidth of the H100 NVL (94 GB at 3.9 TB/s).

Designed for mainstream enterprise servers, H200 NVL uses 2-way or 4-way NVIDIA NVLink bridges to connect up to four GPUs at up to 900 GB/s of GPU-to-GPU bandwidth per GPU. This makes it well suited to deploying large language models (LLMs) such as Llama 3 70B and mixture-of-experts models in standard PCIe servers.
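As a rough sizing aid, the sketch below estimates how much memory a model's weights occupy at a given precision and how many 141 GB GPUs would be needed to hold them. It is a back-of-the-envelope illustration only. The parameter count (about 70 billion for a 70B-class model), the bytes-per-parameter table, and the `usable_fraction` headroom for KV cache and activations are assumptions, not vendor figures.

```python
import math

# Assumed storage cost per parameter for common precisions (bytes).
BYTES_PER_PARAM = {"fp16": 2.0, "bf16": 2.0, "fp8": 1.0}


def weight_footprint_gb(num_params: float, precision: str) -> float:
    """Memory needed for the weights alone, in decimal GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def min_gpus_for_weights(
    num_params: float,
    precision: str,
    gpu_memory_gb: float = 141.0,   # H200 NVL capacity from this page
    usable_fraction: float = 0.9,   # assumed headroom for KV cache, activations
) -> int:
    """Smallest GPU count whose combined usable memory holds the weights."""
    usable_gb = gpu_memory_gb * usable_fraction
    return math.ceil(weight_footprint_gb(num_params, precision) / usable_gb)


if __name__ == "__main__":
    params = 70e9  # assumed parameter count for a 70B-class model
    for precision in ("fp16", "fp8"):
        size = weight_footprint_gb(params, precision)
        gpus = min_gpus_for_weights(params, precision)
        print(f"{precision}: {size:.0f} GB of weights, at least {gpus} GPU(s)")
```

Under these assumptions, FP8 weights for a 70B-class model fit on a single card, while FP16 weights need two NVLink-bridged cards. Real deployments also need room for the KV cache, which grows with batch size and context length.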

Key Features

- First HBM3e PCIe GPU: 141 GB of HBM3e with 4.8 TB/s bandwidth, 1.5x the capacity of H100 NVL.

- NVLink Bridge: 900 GB/s GPU-to-GPU interconnect across up to 4 GPUs.

- Transformer Engine: FP8 precision acceleration for LLM training and inference.

- Second-Generation MIG: Up to 7 secure GPU instances of 16.5 GB each.

- NVIDIA AI Enterprise: Five-year subscription included with every H200 NVL.
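To put the NVLink bridge figure in context, the following sketch compares idealized transfer times for the same payload over NVLink (900 GB/s) and PCIe Gen5 (128 GB/s). It assumes both links run at their quoted peak rates, which are usually aggregate bidirectional figures, so real transfers will be slower. The 10 GB payload is an arbitrary example value.

```python
NVLINK_GB_PER_S = 900.0     # NVLink bridge figure quoted on this page
PCIE_GEN5_GB_PER_S = 128.0  # PCIe Gen5 figure quoted on this page


def transfer_time_ms(payload_gb: float, link_gb_per_s: float) -> float:
    """Idealized time to move payload_gb over a link at its peak rate."""
    if link_gb_per_s <= 0:
        raise ValueError("link bandwidth must be positive")
    return payload_gb / link_gb_per_s * 1000.0


if __name__ == "__main__":
    payload_gb = 10.0  # hypothetical tensor or KV-cache shard size
    nvlink_ms = transfer_time_ms(payload_gb, NVLINK_GB_PER_S)
    pcie_ms = transfer_time_ms(payload_gb, PCIE_GEN5_GB_PER_S)
    print(f"NVLink: {nvlink_ms:.1f} ms, PCIe Gen5: {pcie_ms:.1f} ms")
    print(f"Peak-rate ratio: {pcie_ms / nvlink_ms:.1f}x")
```

The ratio of the two peak rates is about 7x, which is why tensor-parallel inference across bridged cards depends much less on the host PCIe fabric.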

Technical Specifications

| Specification | Details |
|---|---|
| GPU Architecture | NVIDIA Hopper |
| GPU Memory | 141 GB HBM3e |
| Memory Bandwidth | 4.8 TB/s |
| FP64 / FP64 Tensor Core | 30 TFLOPS / 60 TFLOPS |
| FP32 | 60 TFLOPS |
| FP8 / INT8 Tensor Core | 3,341 TFLOPS (with sparsity) |
| Max Thermal Design Power (TDP) | Up to 600 W (configurable) |
| Multi-Instance GPU (MIG) | Up to 7 MIG instances @ 16.5 GB each |
| Interconnect | 2- or 4-way NVIDIA NVLink bridge (900 GB/s per GPU), PCIe Gen5 (128 GB/s) |
| Form Factor | PCIe dual-slot, air-cooled |
| Decoders | 7 NVDEC, 7 JPEG |
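The memory bandwidth figure matters most for LLM token generation, which is often limited by how fast weights can be read from memory. The sketch below applies a common rule of thumb: if every generated token requires reading all weights once, the single-sequence decode rate cannot exceed bandwidth divided by weight size. This is an upper bound under that simplifying assumption, not a benchmark. It ignores KV-cache reads, kernel overheads, and batching, and the 70 GB weight size is an assumed example (a 70B-class model in FP8).

```python
def max_decode_tokens_per_s(weight_gb: float, bandwidth_tb_per_s: float = 4.8) -> float:
    """Bandwidth-bound ceiling on single-sequence decode rate.

    Assumes each generated token reads every weight exactly once and that
    memory runs at its peak rate (4.8 TB/s for H200 NVL per this page).
    """
    if weight_gb <= 0:
        raise ValueError("weight size must be positive")
    bandwidth_gb_per_s = bandwidth_tb_per_s * 1000.0
    return bandwidth_gb_per_s / weight_gb


if __name__ == "__main__":
    weight_gb = 70.0  # assumed: 70B-class model stored in FP8
    ceiling = max_decode_tokens_per_s(weight_gb)
    print(f"Upper bound: about {ceiling:.0f} tokens/s per sequence")
```

Batching several requests together reuses each weight read across sequences, so aggregate throughput can be far higher than this per-sequence ceiling.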

Ideal Use Cases

- Generative AI inference for large language models (LLMs) such as Llama, Mixtral, and Falcon

- Fine-tuning and customization of foundation models on enterprise data

- Retrieval-augmented generation (RAG) pipelines

- High-performance computing and scientific simulation

- Mainstream enterprise server deployments without SXM baseboard requirements

Why Choose This Product?

H200 NVL delivers the performance and memory capacity needed for production generative AI in standard PCIe servers, eliminating the need for specialized HGX platforms. Talk to our specialists about single-node and multi-node configurations, NVLink bridging, AI Enterprise licensing, and integration with your existing rack infrastructure.

Interested? Contact us for personalized pricing and configuration options.
