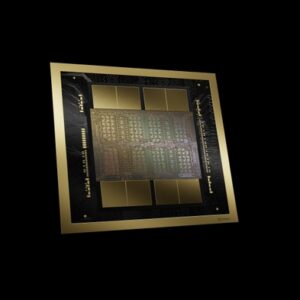

NVIDIA B200

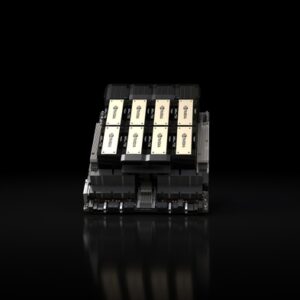

Blackwell architecture flagship: 192GB HBM3e, 8 TB/s bandwidth, and up to 18 PFLOPS of sparse FP4 compute per GPU, built for the next generation of AI training and inference.




NVIDIA H100: the GPU that launched the AI era. 80GB HBM3, 3.35 TB/s bandwidth, 3,958 TFLOPS FP8 (with sparsity), and 900 GB/s NVLink in an SXM form factor for HGX and DGX systems.

NVIDIA H200: Hopper architecture with HBM3e. 141GB memory, 4.8 TB/s bandwidth, and up to 2x faster LLM inference than the H100. The memory-optimized AI GPU.