NVIDIA Jetson AGX Thor: Everything You Need to Know About the Blackwell Edge AI Platform

The NVIDIA Jetson AGX Thor marks the arrival of the Blackwell architecture at the edge. With 2,070 TFLOPS of FP4 sparse AI performance, a 14-core Arm Neoverse-V3AE CPU, and 128GB of LPDDR5X memory, it delivers 7.5x more AI compute and 3.5x better energy efficiency than the Jetson AGX Orin, redefining what’s possible for edge AI.

In this deep dive, we explore the architecture, specifications, and real-world implications of this next-generation platform.

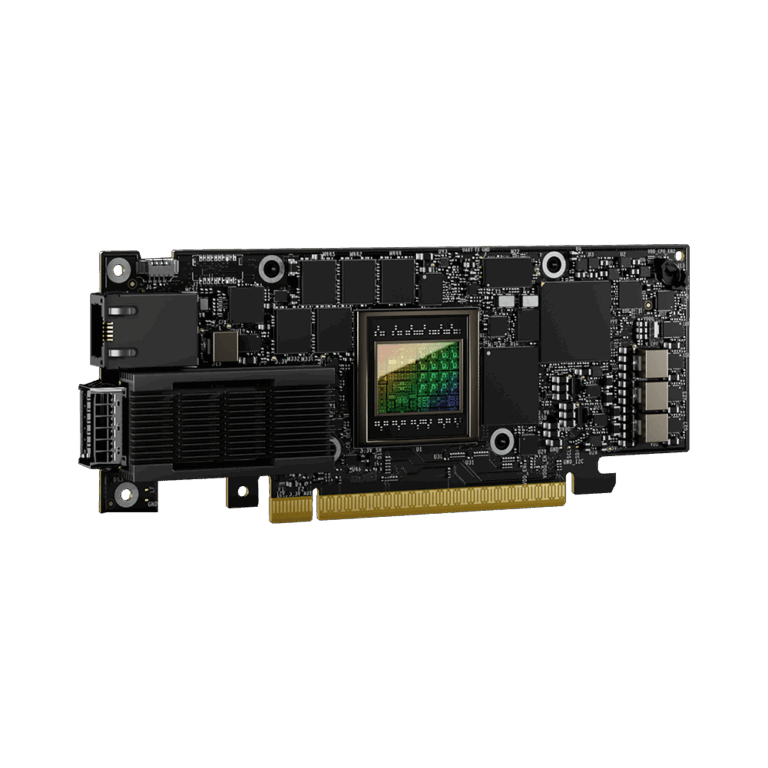

Blackwell Comes to the Edge

Until now, NVIDIA’s Blackwell architecture has been associated with data center GPUs like the B200. The Jetson AGX Thor brings the same architectural DNA (fifth-generation Tensor Cores, native FP4 support, and a next-generation Transformer Engine) to a module that runs at 40 to 130 watts.
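To make "native FP4" more concrete, here is a toy Python sketch of block-scaled 4-bit floating-point (E2M1) quantization. It assumes blocks of 16 values that each share one full-precision scale, chosen so the block's largest magnitude maps to 6.0, the largest E2M1 value. This illustrates the general idea behind block-scaled FP4 formats. It is not NVIDIA's exact NVFP4 encoding, and the function names are hypothetical.

```python
# Toy block-scaled FP4 (E2M1) quantizer, for illustration only.
# Assumptions: 16-value blocks, one full-precision scale per block, and
# round-to-nearest onto the E2M1 grid. Real formats such as NVFP4 store the
# scale in a low-precision type and differ in detail.

E2M1_MAGNITUDES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]  # non-negative E2M1 values
BLOCK_SIZE = 16  # assumed block size


def quantize_block(block: list[float]) -> tuple[float, list[float]]:
    """Quantize one block; return (scale, dequantized values)."""
    amax = max(abs(x) for x in block)
    scale = amax / 6.0 if amax > 0 else 1.0  # map the largest magnitude to 6.0
    dequantized = []
    for x in block:
        mag = abs(x) / scale
        # Nearest grid point; on an exact tie, min() keeps the smaller magnitude.
        q = min(E2M1_MAGNITUDES, key=lambda v: abs(v - mag))
        dequantized.append(q * scale if x >= 0 else -q * scale)
    return scale, dequantized


def fake_quantize(values: list[float]) -> list[float]:
    """Round-trip values through block-scaled FP4 and return the approximations."""
    out: list[float] = []
    for start in range(0, len(values), BLOCK_SIZE):
        _, block = quantize_block(values[start:start + BLOCK_SIZE])
        out.extend(block)
    return out


print(fake_quantize([6.0, 3.0, -1.0, 0.2]))   # -> [6.0, 3.0, -1.0, 0.0]
print(fake_quantize([12.0, 5.0, -0.9, 0.0]))  # -> [12.0, 4.0, -1.0, 0.0]
```

Each value costs 4 bits plus a share of its block's scale; with an 8-bit scale per 16 values, that overhead is 0.5 bit per value. Native hardware support means the Tensor Cores can operate on the 4-bit values directly rather than dequantizing them in software first.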

This matters because FP4 support lets models that previously required FP8 or FP16 precision run with half the weight memory of FP8 (a quarter of FP16) and higher throughput, provided the model tolerates 4-bit quantization. Combined with 128GB of memory, Thor can run large vision-language models and generative AI directly at the edge, with no cloud connection required.
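The memory arithmetic behind that claim is simple: weight storage is parameter count times bits per parameter. The Python sketch below counts weights only (no KV cache, activations, or runtime overhead), treats 1 GB as 10^9 bytes, and uses a hypothetical 70-billion-parameter model as the example.

```python
# Weight-memory estimate: bytes = parameters x bits per parameter / 8.
# Assumptions: weights only (no KV cache, activations, or runtime overhead),
# 1 GB = 10**9 bytes, and a hypothetical 70B-parameter example model.

BITS_PER_PARAM = {"FP16": 16, "FP8": 8, "FP4": 4}


def weight_gb(num_params: float, precision: str) -> float:
    """Approximate weight storage in GB at the given precision."""
    return num_params * BITS_PER_PARAM[precision] / 8 / 1e9


params = 70e9  # hypothetical model size
for precision in ("FP16", "FP8", "FP4"):
    print(f"{precision}: {weight_gb(params, precision):.0f} GB")
# -> FP16: 140 GB, FP8: 70 GB, FP4: 35 GB (one per line)
```

For that hypothetical model, FP16 weights (140 GB) would not fit in 128GB of memory, while FP4 weights (35 GB) leave most of the memory free for the KV cache, other models, and the rest of the system. Real FP4 formats also store per-block scale factors, which add a little on top of this estimate.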

Key Specifications

| Specification | AGX Thor | AGX Orin 64GB | Improvement |
|---|---|---|---|
| GPU Architecture | Blackwell | Ampere | Two-generation leap |
| GPU Cores | 2,560 CUDA | 2,048 CUDA | 1.25x |
| AI Performance | 2,070 TFLOPS (FP4, sparse) | 275 TOPS (INT8, sparse) | ~7.5x (FP4 vs. INT8) |
| CPU | 14-core Neoverse-V3AE | 12-core Cortex-A78AE | New architecture |
| Memory | 128GB LPDDR5X | 64GB LPDDR5 | 2x |
| Memory Bandwidth | 273 GB/s | 204.8 GB/s | 1.33x |
| Power | 40–130W | 15–60W | Higher ceiling |
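As a sanity check, the short Python snippet below recomputes the Improvement column from the raw values in the table. It also divides peak AI throughput by each module's maximum power. That rough proxy happens to land near the quoted 3.5x efficiency figure, but it is not necessarily how NVIDIA derived the number, and it mixes sparse FP4 with sparse INT8.

```python
# Recompute the table's "Improvement" column from its raw values.
# Caveat: the AI-performance figures are sparse FP4 (Thor) vs. sparse INT8 (Orin),
# so that ratio is a headline comparison, not a like-for-like one.

THOR = {"cuda_cores": 2560, "ai_peak": 2070, "memory_gb": 128,
        "bandwidth_gbs": 273, "max_power_w": 130}
ORIN = {"cuda_cores": 2048, "ai_peak": 275, "memory_gb": 64,
        "bandwidth_gbs": 204.8, "max_power_w": 60}


def ratio(key: str) -> float:
    """Thor value divided by Orin value for one table row."""
    return THOR[key] / ORIN[key]


for key in ("cuda_cores", "ai_peak", "memory_gb", "bandwidth_gbs"):
    print(f"{key}: {ratio(key):.2f}x")

# Peak AI throughput per watt at each module's maximum power setting.
# A rough proxy only: real efficiency depends on workload and power mode.
thor_per_watt = THOR["ai_peak"] / THOR["max_power_w"]
orin_per_watt = ORIN["ai_peak"] / ORIN["max_power_w"]
print(f"peak per watt: Thor {thor_per_watt:.1f}, Orin {orin_per_watt:.1f}, "
      f"ratio {thor_per_watt / orin_per_watt:.2f}x")
```

It prints 1.25x, 7.53x, 2.00x, and 1.33x for the four rows and a peak-per-watt ratio of about 3.47x.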

The CPU Upgrade: Neoverse V3AE

The move from Cortex-A78AE to Neoverse-V3AE is more than an incremental improvement. The V3AE is a server-class core design adapted for edge deployment: it brings substantially higher single-thread performance, wider execution pipelines, and improved branch prediction. Running 14 cores at 2.6 GHz, it adds headroom for CPU-bound work, which was a bottleneck in some Orin deployments.

Developer Kit Details

The Jetson AGX Thor Developer Kit ships as a compact board (243 × 112 × 57 mm) with an extensive I/O complement:

- Networking: 5GbE RJ45 + QSFP28 (4x 25GbE) for high-bandwidth sensor data

- Display: HDMI 2.0b + DisplayPort 1.4a for development and debugging

- USB: 2x USB-A 3.2 Gen2 + 2x USB-C 3.1

- Storage: 1TB NVMe on PCIe Gen5 x4

- Expansion: M.2 Key M (PCIe Gen5) + M.2 Key E (PCIe Gen5, for Wi-Fi/Bluetooth)

- Industrial: 2x CAN headers for automotive and industrial buses

- Vision: PVA v3 dedicated vision accelerator + Holoscan Sensor Bridge (HSB) camera support
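To get a feel for what 4x 25GbE means for sensor ingest, here is a back-of-envelope Python sketch. Every camera parameter in it (1080p at 30 fps, 16 bits per pixel, uncompressed) and the 90% usable-link-efficiency factor are hypothetical placeholders, and the helper names are illustrative rather than part of any NVIDIA API.

```python
# Back-of-envelope sensor-ingest budget for the QSFP28 port (4 x 25GbE).
# Hypothetical assumptions: identical uncompressed camera streams and a
# 90% usable fraction of line rate after protocol overhead.

LINK_GBPS = 4 * 25   # aggregate line rate of the four 25GbE lanes
EFFICIENCY = 0.9     # hypothetical usable fraction; measure on real hardware


def stream_gbps(width: int, height: int, bits_per_pixel: int, fps: float) -> float:
    """Bandwidth of one uncompressed video stream in Gbit/s."""
    return width * height * bits_per_pixel * fps / 1e9


def max_streams(per_stream_gbps: float) -> int:
    """Number of identical streams that fit in the usable link budget."""
    return int(LINK_GBPS * EFFICIENCY // per_stream_gbps)


per_stream = stream_gbps(1920, 1080, 16, 30)  # hypothetical 1080p30, 16-bit raw
print(f"{per_stream:.3f} Gbit/s per stream, {max_streams(per_stream)} streams fit")
```

Under those assumptions, the QSFP28 port alone could carry about 90 uncompressed 1080p30 streams; higher resolutions, frame rates, or bit depths shrink that number proportionally.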

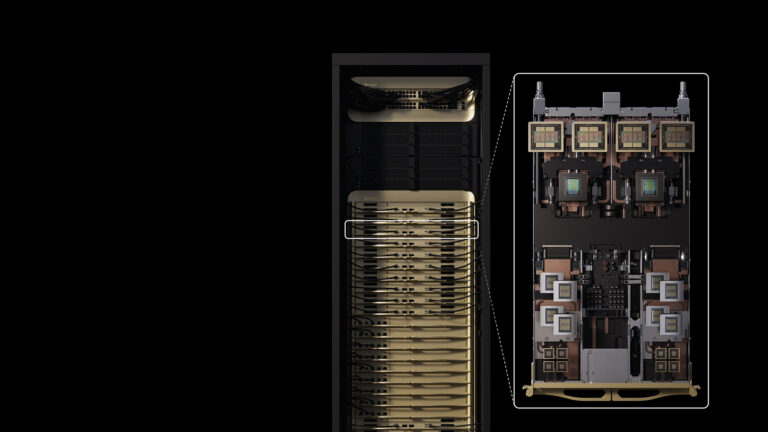

Multi-Instance GPU at the Edge

Thor introduces MIG (Multi-Instance GPU) support at the edge, a first for the Jetson platform. Its GPU, organized into 10 TPCs (Texture Processing Clusters), can be partitioned into isolated GPU instances, each running independent AI workloads. For multi-function edge devices that need to run safety-critical and non-critical models in isolation on a single module, this is a significant new capability.
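Partitioning is ultimately a budgeting exercise over those 10 TPCs. The Python sketch below validates a hypothetical plan and reports CUDA cores per instance, assuming the 2,560 CUDA cores are split evenly (256 per TPC). The instance names and TPC counts are invented for illustration; the partition profiles Thor actually supports are defined by NVIDIA's software, not by this code.

```python
# Hypothetical MIG partition plan for a 10-TPC GPU (illustration only).
# Assumes the 2,560 CUDA cores are split evenly across TPCs; instance names
# and sizes are invented, and real supported profiles come from NVIDIA software.

TOTAL_TPCS = 10
TOTAL_CUDA_CORES = 2560
CORES_PER_TPC = TOTAL_CUDA_CORES // TOTAL_TPCS  # 256 under the even-split assumption


def plan_partitions(requests: dict[str, int]) -> dict[str, int]:
    """Check a TPC allocation and return CUDA cores per instance."""
    if any(tpcs <= 0 for tpcs in requests.values()):
        raise ValueError("every instance needs at least one TPC")
    used = sum(requests.values())
    if used > TOTAL_TPCS:
        raise ValueError(f"requested {used} TPCs, only {TOTAL_TPCS} available")
    return {name: tpcs * CORES_PER_TPC for name, tpcs in requests.items()}


plan = plan_partitions({"safety_perception": 4, "planning": 4, "infotainment": 2})
print(plan)  # -> {'safety_perception': 1024, 'planning': 1024, 'infotainment': 512}
```

Rejecting over-allocation up front reflects the basic idea of isolated instances: they divide a fixed pool of compute rather than sharing it.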

Who Is Thor For?

The Jetson AGX Thor is designed for applications that demand data center-class AI at the edge:

- Humanoid Robots: Real-time perception, planning, and control with multiple concurrent AI models

- Autonomous Vehicles: Multi-sensor fusion with LiDAR, radar, and camera at highway speeds

- Medical Robotics: AI-assisted surgical navigation with functional safety considerations

- Industrial Automation: Complex multi-stage quality inspection pipelines

The Bottom Line

The Jetson AGX Thor doesn’t just improve on the AGX Orin; it fundamentally changes the scale of AI that’s possible at the edge. With 2,070 FP4 TFLOPS, 128GB of memory, and MIG support, it enables edge AI applications that were previously cloud-only. For teams building the next generation of autonomous systems, Thor is the platform to develop on.

Ready to explore Jetson AGX Thor for your project? Contact us for developer kit availability and integration support.