Best GPU for AI Training in 2026: H100 vs H200 vs B200 Compared

The data center GPU market has never been more competitive, or more confusing. With three generations of NVIDIA training GPUs available simultaneously, choosing the right accelerator for your AI workload requires understanding what each generation brings to the table.

In this guide, we compare the NVIDIA H100, H200, and B200 across every metric that matters: raw compute, memory capacity, bandwidth, power efficiency, and real-world AI training performance. Whether you’re building your first GPU cluster or planning a multi-million-dollar AI factory, this comparison will help you make the right call.

The Three Contenders at a Glance

| Specification | H100 SXM | H200 SXM | B200 |
|---|---|---|---|
| Architecture | Hopper | Hopper | Blackwell |
| GPU Memory | 80 GB HBM3 | 141 GB HBM3e | 192 GB HBM3e |
| Memory Bandwidth | 3.35 TB/s | 4.8 TB/s | 8 TB/s |
| FP8 Tensor (with sparsity) | 3,958 TFLOPS | 3,958 TFLOPS | ~9,000 TFLOPS |
| FP32 | 67 TFLOPS | 67 TFLOPS | ~75 TFLOPS |
| NVLink (per GPU) | 900 GB/s | 900 GB/s | 1,800 GB/s |
| TDP | 700W | 700W | 1,000W |
| Transistors | 80B | 80B | 208B |

Memory: The Silent Bottleneck

Memory capacity is often the first constraint in AI training. When your model doesn’t fit in GPU memory, you resort to model parallelism, gradient checkpointing, or offloading, all of which reduce training efficiency.

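The H100’s 80GB holds the 16-bit weights of models up to ~30B parameters (about 60GB) on a single GPU, which is enough for inference. Training needs several times more memory for gradients and optimizer state, so full training of anything beyond a few billion parameters calls for multi-GPU parallelism.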
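Whether a model fits can be estimated from bytes per parameter. The sketch below is a rough check, not a sizing tool: the 2 and 16 bytes-per-parameter figures are common rules of thumb (16-bit weights for inference; 16-bit weights and gradients plus 32-bit master weights and Adam moments for mixed-precision training), the 80% usable-memory headroom is an assumption, and activations and KV cache are ignored. The function names are illustrative.

```python
# Rough single-GPU fit check. Capacities come from the spec table;
# everything else is a rule-of-thumb assumption.
GPU_MEMORY_GB = {"H100": 80, "H200": 141, "B200": 192}

BYTES_PER_PARAM = {
    "inference_fp16": 2,        # 16-bit weights only
    "training_adam_mixed": 16,  # fp16 weights + grads, fp32 master weights + 2 Adam moments
}


def weights_and_state_gb(params_billion: float, mode: str) -> float:
    """Memory for weights (plus optimizer state when training), in GB.

    Activations, KV cache, and framework overhead are NOT included.
    """
    # 1e9 params * bytes per param / 1e9 bytes per GB
    return params_billion * BYTES_PER_PARAM[mode]


def fits(params_billion: float, mode: str, gpu: str, headroom: float = 0.8) -> bool:
    """True if the estimate fits within an assumed usable fraction of GPU memory."""
    return weights_and_state_gb(params_billion, mode) <= GPU_MEMORY_GB[gpu] * headroom


if __name__ == "__main__":
    for gpu in GPU_MEMORY_GB:
        print(gpu,
              "30B inference:", fits(30, "inference_fp16", gpu),
              "| 7B training:", fits(7, "training_adam_mixed", gpu))
```

Under these assumptions, a 30B model fits on one H100 for 16-bit inference, but even a 7B model needs about 112GB of weights and optimizer state for full training, which is why sharding or a larger-memory GPU enters the picture so quickly.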

The H200’s 141GB, about 1.76x the H100’s capacity, is a major advantage for memory-bound workloads. It lets you run larger batch sizes, fit bigger models per GPU, and reduce the degree of parallelism needed. NVIDIA reports up to 2x faster LLM inference on H200 vs H100, a gain that comes from the larger capacity and the 43% higher memory bandwidth rather than from extra compute.

The B200’s 192GB pushes this further. Combined with 8 TB/s bandwidth (2.4x the H100), it handles the largest models and datasets with minimal memory pressure.
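Bandwidth matters because token-by-token LLM generation at small batch sizes is typically limited by how fast the weights can be read from memory. The sketch below gives a simple upper bound under that assumption: every generated token reads all weights once, and compute, KV-cache traffic, and batching are ignored. The function name and the 30B example model are illustrative, not taken from any benchmark.

```python
# Bandwidth-bound ceiling on single-stream decode speed.
# Bandwidth values come from the spec table; the model of "one full
# weight read per token" is a simplifying assumption.
BANDWIDTH_TBPS = {"H100": 3.35, "H200": 4.8, "B200": 8.0}


def max_decode_tokens_per_s(params_billion: float, bytes_per_param: float, gpu: str) -> float:
    """Upper bound on tokens/s if each token requires reading all weights once."""
    weight_bytes = params_billion * 1e9 * bytes_per_param
    return BANDWIDTH_TBPS[gpu] * 1e12 / weight_bytes


if __name__ == "__main__":
    for gpu in BANDWIDTH_TBPS:
        bound = max_decode_tokens_per_s(30, 2, gpu)  # hypothetical 30B model, 16-bit weights
        print(f"{gpu}: at most ~{bound:.0f} tokens/s per stream")
```

Real throughput is lower and depends heavily on batching and kernel efficiency, but the ratios between GPUs in this bound track the bandwidth ratios (about 1.4x for H200 and 2.4x for B200 relative to H100).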

Compute: Hopper vs Blackwell

The H100 and H200 share the same Hopper compute die, so their TFLOPS are identical at every precision level. The H200’s advantage is purely memory: more capacity, more bandwidth.

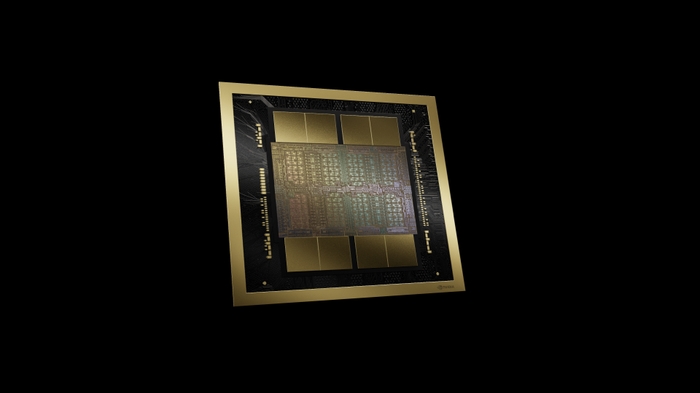

The B200, built on Blackwell, introduces a new compute tier. With 208 billion transistors in a dual-die design and fifth-generation Tensor Cores supporting native FP4 precision, the B200 delivers approximately 2.3x the FP8 compute of the H100 (~9,000 vs 3,958 TFLOPS) and introduces an entirely new FP4 tier at ~18 PFLOPS (sparse) per GPU.

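For AI training, NVIDIA’s own figures go further, claiming up to roughly 4x faster large language model training on Blackwell systems than on the H100. Treat that as a best-case vendor number that depends on precision, model, and system configuration; the raw FP8 ratio is the more conservative planning baseline.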
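To turn peak TFLOPS into a planning number, a common approximation is that training a dense transformer takes about 6 × parameters × tokens floating-point operations. The sketch below applies that rule to the FP8 figures from the spec table. The `utilization` default is an assumed fraction of quoted peak, not a measured value, and the 7B / 1T-token / 64-GPU run is a hypothetical example.

```python
# Back-of-the-envelope training time from peak throughput.
# Peak figures are the vendor FP8 numbers from the spec table.
PEAK_FP8_TFLOPS = {"H100": 3958, "H200": 3958, "B200": 9000}

SECONDS_PER_DAY = 86_400


def training_days(params_billion: float, tokens_billion: float, gpu: str,
                  num_gpus: int, utilization: float = 0.2) -> float:
    """Estimated wall-clock days using the ~6 * N * D FLOPs rule of thumb.

    utilization is the assumed fraction of quoted peak actually sustained.
    """
    total_flops = 6 * (params_billion * 1e9) * (tokens_billion * 1e9)
    sustained_flops_per_s = PEAK_FP8_TFLOPS[gpu] * 1e12 * utilization * num_gpus
    return total_flops / sustained_flops_per_s / SECONDS_PER_DAY


if __name__ == "__main__":
    for gpu in PEAK_FP8_TFLOPS:
        days = training_days(7, 1000, gpu, num_gpus=64)  # hypothetical run
        print(f"{gpu}: ~{days:.1f} days")
```

Because the H100 and H200 share the same compute figures, this model predicts identical training times for them; any real H200 advantage in training comes from memory effects that a pure FLOPs estimate does not capture.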

Interconnect: Scaling Across GPUs

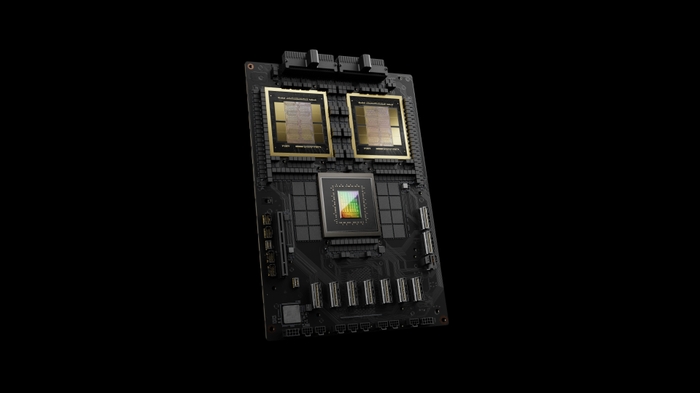

NVLink bandwidth determines how efficiently your GPUs communicate during distributed training. The B200’s fifth-generation NVLink at 1.8 TB/s (2x the H100/H200) enables tighter multi-GPU coupling, which is especially important for large-model training, where all-reduce and other collective operations can take up a large share of each training step.

The B200 also supports NVLink domains of up to 576 GPUs, versus up to 256 for Hopper’s NVLink Switch System, enabling more efficient scaling for the largest training runs.
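The effect of NVLink bandwidth on all-reduce can be approximated with the standard ring all-reduce cost model, in which each GPU transfers about 2 × (n − 1) / n times the payload. The sketch below is a bandwidth-only estimate: it treats the quoted NVLink figure as fully usable (an optimistic assumption), ignores latency and overlap with compute, and uses a hypothetical 14GB gradient payload.

```python
# Bandwidth-only ring all-reduce estimate inside one NVLink domain.
# Bandwidth values come from the spec table; treating them as fully
# usable is an optimistic assumption.
NVLINK_GBPS = {"H100": 900, "H200": 900, "B200": 1800}


def ring_allreduce_seconds(payload_gb: float, num_gpus: int, gpu: str) -> float:
    """Time for one ring all-reduce of payload_gb across num_gpus GPUs."""
    if num_gpus < 2:
        return 0.0  # nothing to reduce across a single GPU
    traffic_gb = 2 * (num_gpus - 1) / num_gpus * payload_gb
    return traffic_gb / NVLINK_GBPS[gpu]


if __name__ == "__main__":
    for gpu in NVLINK_GBPS:
        t = ring_allreduce_seconds(14, 8, gpu)  # hypothetical 14GB of gradients, 8 GPUs
        print(f"{gpu}: ~{t * 1000:.1f} ms per all-reduce")
```

In this model, doubling link bandwidth halves communication time, so the benefit to a full training step depends on how much of that step is spent communicating rather than computing.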

Power and Efficiency

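The B200’s 1,000W TDP is significantly higher than the H100/H200’s 700W. But when normalized for throughput, the B200 delivers substantially more performance per watt: NVIDIA claims up to 25x better energy efficiency for AI inference compared to Hopper. That is an inference figure; for training, even a best-case 4x speedup at 1.43x the power works out to about 2.8x more throughput per watt.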

For data center planners, this means the B200 requires more power per GPU but fewer total GPUs to achieve the same training throughput, potentially reducing overall infrastructure costs.
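The trade-off between per-GPU power and GPU count is simple arithmetic. The sketch below compares GPU power only, using the TDP values from the spec table; the `speedup` argument is whatever per-GPU training speedup you assume or measure, and host, networking, and cooling overhead are left out. The 1,024-GPU cluster is a hypothetical example.

```python
import math

# GPU-only power comparison at equal training throughput.
# TDP values come from the spec table; speedup is an input assumption.
TDP_W = {"H100": 700, "H200": 700, "B200": 1000}


def b200_equivalent(h100_count: int, speedup: float) -> tuple[int, float, float]:
    """Return (B200 GPUs needed, H100 cluster kW, B200 cluster kW).

    speedup is the assumed per-GPU training speedup of B200 over H100.
    """
    b200_count = math.ceil(h100_count / speedup)
    h100_kw = h100_count * TDP_W["H100"] / 1000
    b200_kw = b200_count * TDP_W["B200"] / 1000
    return b200_count, h100_kw, b200_kw


if __name__ == "__main__":
    for speedup in (2.3, 4.0):  # raw FP8 ratio vs. best-case vendor claim
        count, h100_kw, b200_kw = b200_equivalent(1024, speedup)
        print(f"speedup {speedup}: {count} B200s, {b200_kw:.0f} kW vs {h100_kw:.0f} kW")
```

The break-even point is a speedup of about 1.43x (1,000W / 700W): above that, the B200 cluster draws less GPU power for the same throughput; below it, the H100 cluster does.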

When to Choose Each GPU

Choose the H100 if:

- You need proven, battle-tested hardware with the broadest software ecosystem

- Your workloads are compute-bound rather than memory-bound

- Budget optimization is critical and H100 pricing has become favorable

- You’re expanding an existing H100-based cluster

Choose the H200 if:

- Your workloads are memory-bound (large models, large batch inference)

- You want a drop-in H100 upgrade with no software changes

- LLM inference throughput is your primary metric

- You need 141GB per GPU without moving to Blackwell’s power requirements

Choose the B200 if:

- You’re building new infrastructure and want the highest performance per GPU

- You’re training frontier models where every TFLOP matters

- Your data center can accommodate 1,000W per GPU cooling and power

- You need FP4 precision support for next-generation efficient training

The Bottom Line

There is no single “best” GPU, only the best GPU for your specific workload, budget, and infrastructure constraints. The H100 remains the proven workhorse, the H200 is the smart upgrade for memory-hungry workloads, and the B200 is the performance king for those ready to invest in Blackwell infrastructure.

Not sure which GPU fits your AI training workload? Contact our team for a personalized recommendation based on your model size, training budget, and data center capabilities.