NVIDIA B200 Deep Dive: Inside the Blackwell Architecture for Data Centers

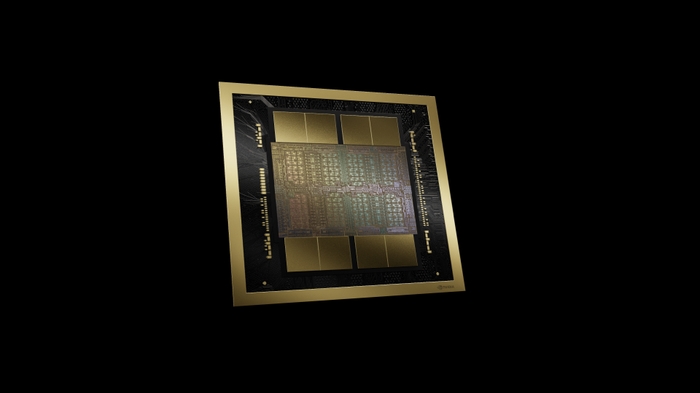

The NVIDIA B200 is one of the most advanced data center GPUs built to date. With 208 billion transistors across two reticle-limited dies connected by a 10 TB/s chip-to-chip interconnect, it represents a fundamental architectural leap from the Hopper generation. But raw transistor counts don’t tell the full story.

In this deep dive, we explore the Blackwell architecture innovations that make the B200 the engine of the next generation of AI factories.

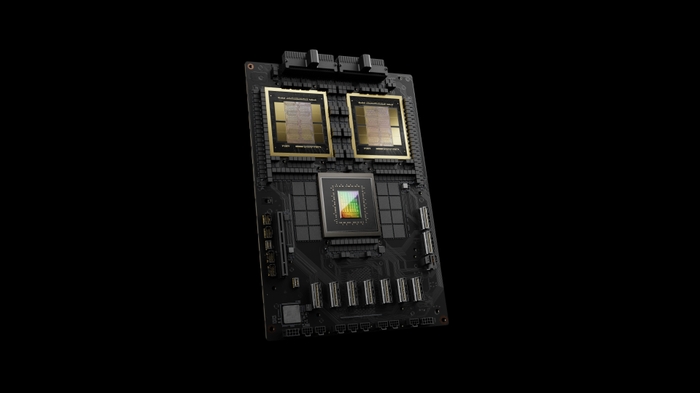

Dual-Die Architecture

The B200 is too large for a single reticle, so NVIDIA builds it as two dies connected by a custom high-bandwidth interface (NV-HBI) running at 10 TB/s. This chip-to-chip link is so fast that software sees the B200 as a single monolithic GPU. There’s no programmer-visible seam between the two dies.

This dual-die approach lets NVIDIA pack in 208 billion transistors, 2.6x as many as the H100’s 80 billion, while keeping each die within the reticle limit and manufacturing yields high. It’s conceptually similar to AMD’s chiplet approach, but optimized for the extreme bandwidth requirements of AI workloads.

Fifth-Generation Tensor Cores and FP4

The B200’s Tensor Cores introduce native FP4 (4-bit floating point) precision. This matters because modern AI models can often be quantized to FP4 with minimal accuracy loss, effectively doubling the compute throughput compared to FP8.

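At FP4 with sparsity, a single B200 delivers approximately 18 PFLOPS, roughly 4.5x the H100’s FP8 performance with sparsity (about 4 PFLOPS). FP4 chiefly benefits inference, where aggressive quantization is best established; training typically still runs at higher precision such as FP8 or BF16, so its speedup depends more on the other improvements described below.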
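To make the idea of 4-bit floating point concrete, here is a minimal sketch of rounding a small block of weights to the E2M1 format (1 sign bit, 2 exponent bits, 1 mantissa bit), whose representable magnitudes are 0, 0.5, 1, 1.5, 2, 3, 4, and 6. The function names, the per-block scaling rule, and the sample weights are assumptions made for illustration; the scaling formats and rounding behavior that Blackwell hardware actually uses may differ in detail.

```python
# Magnitudes representable by a 4-bit E2M1 float (1 sign, 2 exponent, 1 mantissa bit).
E2M1_MAGNITUDES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]


def quantize_fp4(values):
    """Round each value to the nearest E2M1 number after per-block scaling.

    Returns (scale, quantized) such that value ~= scale * quantized.
    The scaling rule is an illustrative assumption: the largest magnitude
    in the block is mapped onto the largest FP4 magnitude (6.0).
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / E2M1_MAGNITUDES[-1] if max_abs > 0 else 1.0
    quantized = []
    for v in values:
        target = abs(v) / scale
        # Nearest representable magnitude; ties resolve to the smaller one.
        nearest = min(E2M1_MAGNITUDES, key=lambda m: abs(m - target))
        quantized.append(nearest if v >= 0 else -nearest)
    return scale, quantized


def dequantize_fp4(scale, quantized):
    """Map FP4 codes back to approximate real values."""
    return [scale * q for q in quantized]


# Hypothetical weights, chosen only to show the rounding error.
weights = [0.12, -0.03, 0.6, -0.45]
scale, q = quantize_fp4(weights)
restored = dequantize_fp4(scale, q)
for original, approx in zip(weights, restored):
    print(f"{original:+.2f} -> {approx:+.2f}")
```

With these sample values the block scale is about 0.1, so 0.12 is stored as 1.0 and restored as roughly 0.10, while -0.45 is stored as -4.0 and restored as roughly -0.40. Each value occupies half the bits of FP8, which is where the throughput doubling comes from; the rounding error is the accuracy cost that quantization techniques try to keep small.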

Memory: 192GB HBM3e at 8 TB/s

The B200 features 192GB of HBM3e memory with 8 TB/s of bandwidth, about 2.4x the H100’s 3.35 TB/s. This is critical for large language model training, where memory bandwidth often determines how quickly model weights and gradients can be moved through the compute pipeline.

The 192GB capacity also means larger model shards per GPU, reducing the degree of parallelism needed and improving overall training efficiency.
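A rough back-of-the-envelope calculation shows how capacity and bandwidth interact. The sketch below estimates the weights-only footprint of a hypothetical 70-billion-parameter model at several precisions, and how long one full pass over those weights would take at the 8 TB/s peak. The model size and helper names are assumptions for illustration, and the estimate ignores activations, optimizer state, and KV cache, all of which add to real memory use.

```python
HBM_CAPACITY_GB = 192       # per-GPU capacity quoted in this article
HBM_BANDWIDTH_TBPS = 8.0    # per-GPU peak bandwidth quoted in this article

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "fp4": 0.5}


def weight_footprint_gb(params_billions, precision):
    """Weights-only footprint in GB (1 GB = 1e9 bytes)."""
    return params_billions * BYTES_PER_PARAM[precision]


def full_read_time_ms(footprint_gb):
    """Time to stream the weights once from HBM at peak bandwidth."""
    gb_per_second = HBM_BANDWIDTH_TBPS * 1000
    return footprint_gb / gb_per_second * 1000


# Hypothetical 70B-parameter model.
for precision in ("fp16", "fp8", "fp4"):
    gb = weight_footprint_gb(70, precision)
    fits = gb <= HBM_CAPACITY_GB
    print(f"{precision}: {gb:.0f} GB, fits on one GPU: {fits}, "
          f"one pass over weights: {full_read_time_ms(gb):.2f} ms")
```

Under these assumptions the FP16 weights take 140 GB and fit on a single B200, and streaming them once at peak bandwidth takes about 17.5 ms. For bandwidth-bound workloads, that pass time is a floor on per-step latency, which is why both capacity and bandwidth matter.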

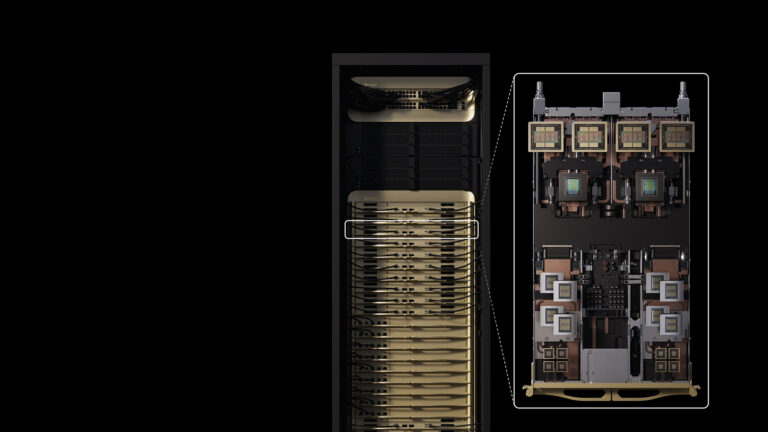

Fifth-Generation NVLink

NVLink 5 delivers 1.8 TB/s of bidirectional bandwidth per GPU, double the H100’s 900 GB/s. In an 8-GPU HGX B200 system, NVLink provides 14.4 TB/s of aggregate bandwidth, turning the 8 GPUs into what effectively behaves as a single massive accelerator.

NVLink 5 also works with NVLink Switch technology to build NVLink domains of up to 576 GPUs, so multi-rack training runs can communicate at NVLink speeds rather than falling back to slower network fabrics.
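The aggregate figure for an 8-GPU system is simply 8 x 1.8 TB/s. To get a feel for what that bandwidth means for training, the sketch below also estimates a bandwidth-only lower bound for a ring all-reduce of gradients across the 8 GPUs. The ring model, the assumption that half of the bidirectional bandwidth is usable in each direction, and the 10 GB payload are all illustrative assumptions; real collective libraries may choose other algorithms, and latency and protocol overhead are ignored.

```python
NVLINK_BIDIR_TBPS = 1.8   # per-GPU bidirectional bandwidth quoted in this article
GPUS_PER_NODE = 8         # HGX B200 system size quoted in this article


def aggregate_bandwidth_tbps(gpus=GPUS_PER_NODE, per_gpu=NVLINK_BIDIR_TBPS):
    """Sum of per-GPU NVLink bandwidth across the node."""
    return gpus * per_gpu


def ring_allreduce_ms(payload_gb, gpus=GPUS_PER_NODE,
                      per_gpu_bidir_tbps=NVLINK_BIDIR_TBPS):
    """Bandwidth-only lower bound for a ring all-reduce, in milliseconds.

    In a ring all-reduce each GPU sends 2 * (N - 1) / N times the payload.
    Assumes half of the bidirectional bandwidth is available for sending.
    """
    send_gb_per_second = per_gpu_bidir_tbps * 1000 / 2
    traffic_gb = 2 * (gpus - 1) / gpus * payload_gb
    return traffic_gb / send_gb_per_second * 1000


print(f"Aggregate NVLink bandwidth: {aggregate_bandwidth_tbps():.1f} TB/s")
# Hypothetical 10 GB gradient buffer.
print(f"Ring all-reduce of 10 GB: at least {ring_allreduce_ms(10):.1f} ms")
```

Under these assumptions, each GPU sends 17.5 GB at 900 GB/s, so the all-reduce cannot finish in less than about 19.4 ms. Halving the per-GPU bandwidth to the H100 figure doubles that bound, which is the practical meaning of the NVLink upgrade for gradient synchronization.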

Real-World Impact

NVIDIA reports that DGX B200 (8x B200 GPUs) delivers:

- 3x faster training than DGX H100 on large language models

- 15x faster inference on real-time AI reasoning workloads

- Significantly better energy efficiency per token generated

Who Should Consider the B200?

The B200 is designed for organizations building new AI infrastructure at scale. If you’re planning a GPU cluster purchase in 2026, the B200 offers the best performance per GPU and the most efficient architecture for large-scale training. For existing H100 users, the upgrade path is compelling, but requires planning for the higher per-GPU power draw (1,000W vs 700W).
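For power planning, a quick estimate can combine the per-GPU power figures above with NVIDIA’s reported 3x training speedup. The sketch below is a simplification under stated assumptions: it treats every GPU as running at its rated power, counts GPUs only (no CPUs, networking, or cooling), and uses hypothetical helper names.

```python
B200_WATTS = 1000   # per-GPU power draw quoted in this article
H100_WATTS = 700    # per-GPU power draw quoted in this article
GPUS_PER_NODE = 8


def node_gpu_power_kw(watts_per_gpu, gpus=GPUS_PER_NODE):
    """GPU-only power for one node, in kW (excludes CPUs, NICs, cooling)."""
    return watts_per_gpu * gpus / 1000


def relative_perf_per_watt(speedup, new_watts, old_watts):
    """Speedup divided by the increase in power draw."""
    return speedup / (new_watts / old_watts)


print(f"H100 node GPU power: {node_gpu_power_kw(H100_WATTS):.1f} kW")
print(f"B200 node GPU power: {node_gpu_power_kw(B200_WATTS):.1f} kW")
# 3x is the vendor-reported training speedup, not an independent measurement.
print(f"Training perf per watt vs H100: "
      f"{relative_perf_per_watt(3.0, B200_WATTS, H100_WATTS):.1f}x")
```

Under these assumptions, an 8-GPU node goes from 5.6 kW to 8.0 kW of GPU power, while training performance per watt improves by about 2.1x. The per-rack power and cooling budget therefore rises even though the energy cost per unit of work falls.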

Planning your next AI infrastructure investment? Contact us for HGX B200 configurations, data center requirements, and deployment planning.