NVIDIA B200

Blackwell architecture flagship — 192GB HBM3e, 8 TB/s bandwidth, up to 18 PFLOPS FP4 sparse per GPU. The next generation of AI training and inference.

🚀 Express Shipping Available Across Europe & MENA

- Full Insurance on All Shipments

- Tracked Delivery & Real-Time Updates

Overview

The NVIDIA B200 is the flagship data center GPU built on the NVIDIA Blackwell architecture. With 192GB of HBM3e memory, 8 TB/s of memory bandwidth, and up to 18 PFLOPS of sparse FP4 performance per GPU, it is a generational leap over the Hopper H100. NVIDIA cites up to 3x faster training and up to 15x faster inference for an 8-GPU HGX B200 system compared with HGX H100.

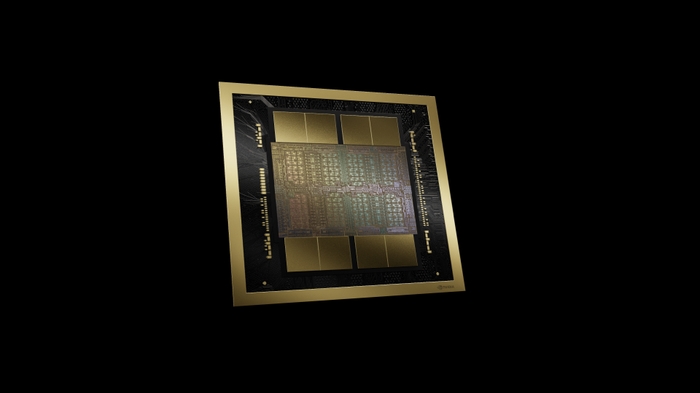

The B200 packs 208 billion transistors, built on TSMC's 4NP process, into two reticle-limited dies joined by a 10 TB/s chip-to-chip interconnect so that they operate as a single GPU. It introduces fifth-generation NVLink at 1.8 TB/s per GPU and native FP4 precision through fifth-generation Tensor Cores, raising the AI compute density available in a single GPU.
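To make "native FP4" concrete, here is a minimal sketch that decodes the 4-bit E2M1 format and quantizes a small block of values with one shared scale. It assumes the E2M1 layout from the OCP Microscaling (MX) specification (1 sign bit, 2 exponent bits with bias 1, 1 mantissa bit). The plain-float block scale, the function names, and the tie-breaking rule are illustrative assumptions, not a description of how Blackwell's Tensor Cores or Transformer Engine implement FP4.

```python
# Sketch of FP4 (E2M1) decoding and block-scaled quantization.
# Assumption: E2M1 layout per the OCP MX spec (1 sign, 2 exponent bits with
# bias 1, 1 mantissa bit). The float per-block scale is illustrative only.


def decode_e2m1(code: int) -> float:
    """Decode a 4-bit E2M1 code (0..15) to its real value."""
    sign = -1.0 if code & 0b1000 else 1.0
    exp = (code >> 1) & 0b11
    man = code & 0b1
    if exp == 0:  # subnormal range: 0.0 or 0.5
        return sign * 0.5 * man
    return sign * (1.0 + 0.5 * man) * 2.0 ** (exp - 1)


# All distinct values reachable by the 16 codes (+0 and -0 collapse to 0.0).
E2M1_VALUES = sorted({decode_e2m1(c) for c in range(16)})


def quantize_block(block: list[float]) -> tuple[float, list[float]]:
    """Scale the block so its largest magnitude maps to 6.0 (the E2M1 max),
    then snap each element to the nearest representable value.
    Ties go to the smaller value here; hardware rounding may differ."""
    amax = max(abs(x) for x in block)
    scale = amax / 6.0 if amax > 0 else 1.0
    q = [min(E2M1_VALUES, key=lambda v: abs(x / scale - v)) for x in block]
    return scale, q


def dequantize_block(scale: float, q: list[float]) -> list[float]:
    """Recover approximate original values by reapplying the block scale."""
    return [scale * v for v in q]


if __name__ == "__main__":
    print("E2M1 values:", E2M1_VALUES)
    scale, q = quantize_block([0.1, -0.7, 1.3, 2.9])
    print("scale:", scale, "codes:", q)
    print("dequantized:", dequantize_block(scale, q))
```

With only 15 distinct values and a maximum magnitude of 6.0, FP4 depends on per-block scaling to cover a tensor's dynamic range, which is why FP4 formats pair 4-bit elements with shared scale factors.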

Key Features

- Up to 18 PFLOPS FP4 Sparse: Fifth-generation Tensor Cores with FP4 precision

- 192GB HBM3e: Massive memory for training the largest AI models

- 8 TB/s Memory Bandwidth: About 2.4x the 3.35 TB/s of the H100 SXM, for faster data throughput

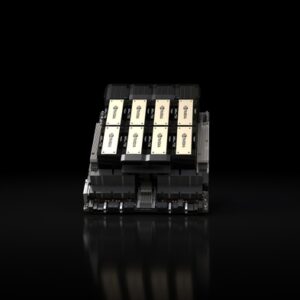

- NVLink 1.8 TB/s: Fifth-generation NVLink, scaling to NVLink domains of up to 576 GPUs

- 208B Transistors: Dual-die design on TSMC 4NP process

- Second-Gen Transformer Engine: Adds FP4 support with micro-tensor scaling to accelerate LLM and mixture-of-experts training and inference
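The bandwidth figures above can be checked with a few lines of arithmetic. This sketch assumes the H100 SXM's published peak of 3.35 TB/s as the comparison point and uses peak spec-sheet numbers throughout; sustained bandwidth in real workloads is lower. The function names are hypothetical.

```python
# Back-of-envelope bandwidth arithmetic from the B200 spec figures.
# Assumption: H100 SXM peak HBM3 bandwidth of 3.35 TB/s as the reference.
# All values are peak numbers; sustained bandwidth is lower in practice.

B200_BW_TBPS = 8.0   # B200 HBM3e bandwidth, TB/s
H100_BW_TBPS = 3.35  # H100 SXM HBM3 bandwidth, TB/s (assumed reference)
B200_MEM_GB = 192    # B200 HBM3e capacity, GB


def bandwidth_ratio(new_tbps: float, old_tbps: float) -> float:
    """Ratio of two peak memory bandwidths."""
    return new_tbps / old_tbps


def full_sweep_ms(capacity_gb: float, bw_tbps: float) -> float:
    """Milliseconds to read the entire memory once at peak bandwidth.
    GB / (TB/s) = 1e9 / 1e12 s = 1e-3 s, so the quotient is already in ms."""
    return capacity_gb / bw_tbps


if __name__ == "__main__":
    ratio = bandwidth_ratio(B200_BW_TBPS, H100_BW_TBPS)
    print(f"B200 vs H100 bandwidth: {ratio:.2f}x")
    sweep = full_sweep_ms(B200_MEM_GB, B200_BW_TBPS)
    print(f"One full pass over {B200_MEM_GB} GB: {sweep:.0f} ms")
```

The second figure (24 ms) is roughly a floor on any step that must read every byte of HBM once, such as a memory-bound decode step over weights that fill the GPU.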

Technical Specifications

| Specification | Details |
|---|---|
| GPU Architecture | NVIDIA Blackwell |
| Transistors | 208 billion |
| Memory | 192 GB HBM3e |
| Memory Bandwidth | 8 TB/s |
| FP4 Tensor (Sparse) | ~18 PFLOPS |
| FP8 Tensor (Sparse) | ~9 PFLOPS |
| FP32 | ~75 TFLOPS |
| NVLink | 1.8 TB/s (5th Gen) |
| PCIe | Gen5 |
| TDP | Up to 1,000W |
| Form Factor | SXM |
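Dividing peak Tensor throughput by memory bandwidth gives the roofline "ridge point": the arithmetic intensity (FLOPs per byte moved from HBM) a kernel needs before it becomes compute-bound rather than memory-bound. This sketch uses the peak figures from the table and assumes dense throughput is half the sparse figure, since NVIDIA's sparse numbers assume 2:4 structured sparsity. The function names are illustrative.

```python
# Roofline ridge point from the spec-table figures.
# Assumptions: peak spec-sheet values; dense throughput = sparse / 2,
# because the sparse figures assume 2:4 structured sparsity.

PEAK_BW_BYTES = 8e12  # 8 TB/s

PEAK_SPARSE_FLOPS = {
    "FP4": 18e15,  # ~18 PFLOPS sparse
    "FP8": 9e15,   # ~9 PFLOPS sparse
}


def ridge_point(peak_flops: float, peak_bw: float) -> float:
    """FLOPs per byte at which the compute and bandwidth limits intersect."""
    return peak_flops / peak_bw


def attainable_flops(intensity: float, peak_flops: float, peak_bw: float) -> float:
    """Classic roofline: min(compute roof, bandwidth * arithmetic intensity)."""
    return min(peak_flops, peak_bw * intensity)


if __name__ == "__main__":
    for fmt, sparse in PEAK_SPARSE_FLOPS.items():
        dense = sparse / 2  # undo the 2:4 sparsity doubling
        print(
            f"{fmt}: ridge {ridge_point(dense, PEAK_BW_BYTES):.0f} FLOP/B dense, "
            f"{ridge_point(sparse, PEAK_BW_BYTES):.0f} FLOP/B sparse"
        )
```

Kernels whose intensity falls well below these ridge points, such as small-batch LLM decoding, are limited by the 8 TB/s of bandwidth rather than by peak FP4 throughput.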

Ideal Use Cases

- Next-generation AI model training — trillion+ parameter foundation models

- High-throughput AI inference with FP4 precision for production deployment

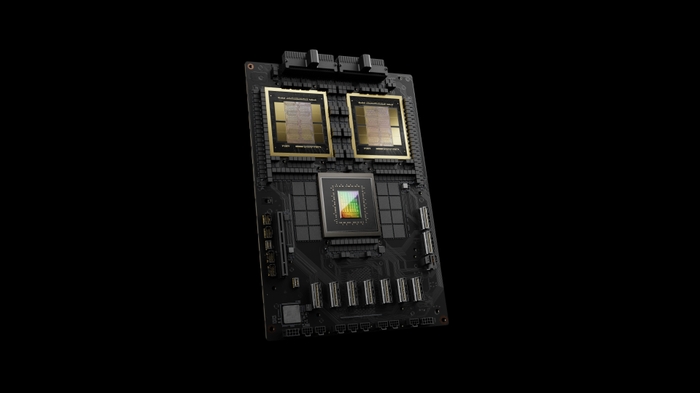

- Enterprise AI factory infrastructure (HGX B200 with 8 GPUs)

- Scientific computing and HPC with massive memory requirements
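For sizing an HGX B200 node, a quick check is whether a model's weights fit in the combined HBM of 8 GPUs. This sketch assumes the full 192 GB per GPU is usable (real usable capacity is somewhat lower) and counts weights only, ignoring KV cache, activations, gradients, and optimizer state. The 1-trillion-parameter model is a hypothetical example.

```python
# Rough check of whether model weights fit in one 8-GPU HGX B200 node.
# Assumptions: 192 GB usable per GPU; weights only (no KV cache,
# activations, gradients, or optimizer state).

GPUS_PER_NODE = 8
MEM_PER_GPU_GB = 192

BYTES_PER_PARAM = {"BF16": 2.0, "FP8": 1.0, "FP4": 0.5}


def weight_footprint_gb(params_billions: float, fmt: str) -> float:
    """Weight memory in GB: (params_billions * 1e9) * bytes / 1e9 bytes per GB."""
    return params_billions * BYTES_PER_PARAM[fmt]


def fits_in_node(params_billions: float, fmt: str,
                 gpus: int = GPUS_PER_NODE,
                 mem_gb: float = MEM_PER_GPU_GB) -> bool:
    """True if the weights fit in the node's aggregate HBM."""
    return weight_footprint_gb(params_billions, fmt) <= gpus * mem_gb


if __name__ == "__main__":
    node_gb = GPUS_PER_NODE * MEM_PER_GPU_GB  # 1,536 GB aggregate
    for fmt in BYTES_PER_PARAM:
        need = weight_footprint_gb(1000, fmt)  # hypothetical 1T-parameter model
        verdict = "fits" if fits_in_node(1000, fmt) else "does not fit"
        print(f"1T params @ {fmt}: {need:,.0f} GB of {node_gb:,} GB -> {verdict}")
```

Weights are only part of the footprint. Training also stores gradients and optimizer state, so training a trillion-parameter model spans many nodes even though its FP4 or FP8 weights alone would fit in one.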

Why Choose This Product?

The B200 is the compute engine of the Blackwell generation, delivering a generational leap in AI performance per GPU. For organizations building new AI infrastructure or planning their next hardware refresh, the B200 offers a substantial gain in performance per GPU and performance per watt over Hopper-based systems.

Interested? Contact us for HGX B200 configurations, data center planning, and enterprise pricing.
