How to Set Up NVIDIA DGX Spark for Local LLM Development

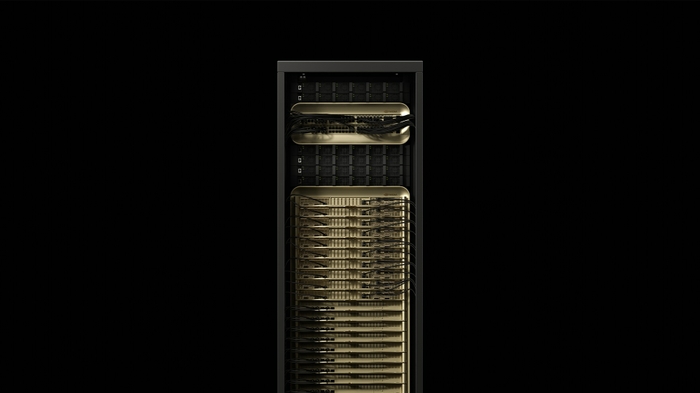

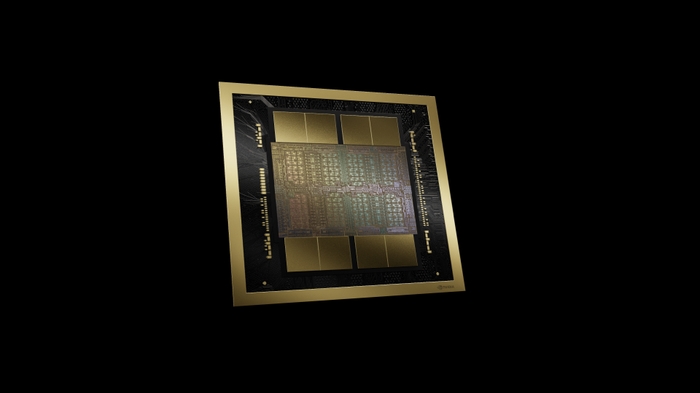

The NVIDIA DGX Spark puts up to 1 petaFLOP of FP4 AI compute on your desk. With 128GB of unified memory and the GB10 Grace Blackwell Superchip, it can run inference on models of up to 200 billion parameters and fine-tune models of up to 70 billion parameters, all locally.

This guide walks you through the setup process and gets you running your first large language model.

Hardware Setup

The DGX Spark is remarkably simple to set up physically:

- Place the unit on a stable, well-ventilated surface. At roughly 150 × 150 × 50 mm, it fits on most desks

- Connect Ethernet via the 10GbE RJ45 port (or use Wi-Fi 7 for wireless setup)

- Connect a display via HDMI 2.1a for initial setup (optional; you can also SSH in)

- Connect keyboard/mouse via USB-C if using the display

- Connect the 240W power supply; the system boots automatically

First Boot and DGX OS

DGX Spark comes preloaded with NVIDIA DGX OS, a purpose-built Linux distribution optimized for AI development. The NVIDIA AI software stack is pre-installed, including:

- CUDA toolkit and drivers

- cuDNN, TensorRT, and NCCL libraries

- NVIDIA Container Toolkit (Docker with GPU support)

- Python environment with key AI packages

On first boot, follow the on-screen prompts to configure your user account, network settings, and timezone.

Verify the System

# Check GPU status

nvidia-smi

# Verify CUDA

nvcc --version

# Check available memory (should show ~128GB)

free -h

# Test Docker GPU access (the image tag is an example; use a current tag from the nvidia/cuda repository)

docker run --rm --gpus all nvidia/cuda:12.0.0-base-ubuntu22.04 nvidia-smi

Running Your First LLM

The fastest way to run an LLM on DGX Spark is using NVIDIA’s NIM (NVIDIA Inference Microservice) containers:

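# Log in to the NGC registry (username: $oauthtoken, password: your NGC API key)

docker login nvcr.io

# Pull and run Llama 3.1 8B via NIM (replace your_key_here with your NGC API key)

docker run -d --gpus all -p 8000:8000 -e NGC_API_KEY=your_key_here nvcr.io/nim/meta/llama-3.1-8b-instruct:latest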

# Test inference

curl -X POST http://localhost:8000/v1/chat/completions -H "Content-Type: application/json" -d '{

"model": "meta/llama-3.1-8b-instruct",

"messages": [{"role": "user", "content": "Explain GPU memory bandwidth in simple terms."}]

}'

With 128GB of unified memory, the DGX Spark can handle much larger models too. Try Llama 3.1 70B in a quantized format (its 16-bit weights alone come to about 140GB) for a more capable assistant, or experiment with multimodal models that process both text and images.
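As a rough sizing aid for larger models, the sketch below estimates the memory taken by the weights alone at different precisions. It assumes 1 GB = 10^9 bytes and ignores the KV cache, activations, and runtime overhead, so real usage will be higher. `weights_gb` is a hypothetical helper name, not part of any NVIDIA tool.

```bash
# Rough size of model weights alone, in GB (1 GB = 10^9 bytes).
# weights_gb is a hypothetical helper for back-of-the-envelope sizing.
# Uses integer arithmetic, so fractional results are truncated.
weights_gb() {
  local params bits
  params=$1   # parameter count in billions, e.g. 70 for a 70B model
  bits=$2     # bits per parameter: 16 (FP16/BF16), 8 (FP8/INT8), 4 (FP4/INT4)
  echo $((params * bits / 8))
}

# Compare a 70B model at three precisions against the 128GB memory pool
for bits in 16 8 4; do
  echo "70B at ${bits}-bit: ~$(weights_gb 70 "$bits") GB"
done
```

By this estimate, a 70B model needs about 140 GB at 16-bit, 70 GB at 8-bit, and 35 GB at 4-bit, which is why a quantized format is the practical choice at this size.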

Fine-Tuning a Model

The DGX Spark’s unified memory architecture makes fine-tuning practical on models up to ~70B parameters. Here’s a basic workflow using NVIDIA NeMo:

# Install NeMo Framework

pip install "nemo_toolkit[all]"

# Download a base model and your training data

# Configure fine-tuning parameters

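# Launch training; the unified memory gives the GPU access to the full 128GB pool

# rather than a smaller dedicated VRAM budget

The key advantage of DGX Spark for fine-tuning is the 128GB unified memory. On a discrete GPU with less memory, a 70B model has to be sharded across several cards or offloaded to host RAM. On DGX Spark, the CPU and GPU share one memory pool, so more of the model stays in fast-access memory during training. Parameter-efficient techniques (LoRA, QLoRA) are still needed at this scale: 70B parameters in 16-bit precision take about 140GB for the weights alone, which exceeds 128GB before gradients and optimizer state are counted.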
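To make the fine-tuning budget concrete, here is a back-of-the-envelope sketch. It assumes the commonly cited figure of about 16 bytes per parameter for full fine-tuning with mixed-precision Adam (2 for weights, 2 for gradients, 12 for optimizer state) and 0.5 bytes per parameter for a frozen 4-bit base model as used in QLoRA. Activations and adapter weights are left out, and `train_state_gb` is a hypothetical helper name.

```bash
# Approximate training-state memory in GB (1 GB = 10^9 bytes).
# train_state_gb is a hypothetical helper; bytes_per_param is an assumption you supply:
#   16  = full fine-tuning with mixed-precision Adam
#         (2 weights + 2 gradients + 12 optimizer state)
#   0.5 = frozen 4-bit base weights, as in QLoRA (adapter weights excluded)
train_state_gb() {
  awk -v params_billion="$1" -v bytes_per_param="$2" \
    'BEGIN { printf "%.0f\n", params_billion * bytes_per_param }'
}

echo "70B full fine-tune: ~$(train_state_gb 70 16) GB"
echo "70B QLoRA base:     ~$(train_state_gb 70 0.5) GB"
echo "8B full fine-tune:  ~$(train_state_gb 8 16) GB"
```

Under these assumptions, only the quantized 70B base fits comfortably in 128GB, while full fine-tuning is realistic for much smaller models.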

High-Speed Networking

The DGX Spark includes an NVIDIA ConnectX-7 NIC with two QSFP ports supporting up to 200GbE. This enables:

- Multi-Spark clusters: Connect multiple DGX Spark units for distributed training

- Cloud bursting: Seamlessly move workloads between local and cloud infrastructure

- High-speed data transfer: Load large datasets from network storage at wire speed
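To put "wire speed" in perspective, the sketch below computes the ideal transfer time for a given payload at a given link rate. It ignores protocol overhead and assumes the storage on both ends can keep up, so treat the results as lower bounds. `transfer_seconds` is a hypothetical helper name.

```bash
# Ideal time, in seconds, to move size_gb gigabytes over a link of
# link_gbps gigabits per second (no protocol or storage overhead).
# transfer_seconds is a hypothetical helper for rough planning.
transfer_seconds() {
  awk -v size_gb="$1" -v link_gbps="$2" \
    'BEGIN { printf "%.1f\n", size_gb * 8 / link_gbps }'
}

# Example: 140 GB of model weights over the QSFP link vs. the 10GbE port
echo "140 GB over 200GbE: $(transfer_seconds 140 200) s"
echo "140 GB over 10GbE:  $(transfer_seconds 140 10) s"
```

For that payload, the ideal figures are 5.6 seconds over 200GbE and 112 seconds over 10GbE.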

Tips for Best Performance

- Use the internal NVMe storage (1TB or 4TB option) for model weights and datasets; the internal SSD is much faster than network-mounted storage for AI workloads

- For maximum inference throughput, use TensorRT to optimize your models before deployment

- Monitor power consumption and thermal status via nvidia-smi; the 240W power budget is shared between GPU and CPU compute

- Use the NGC catalog for pre-optimized containers rather than building from source
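For the monitoring tip, one possible approach is to query nvidia-smi in CSV form and classify each reading. The sketch below uses the standard `power.draw` and `temperature.gpu` query fields. The `check_reading` helper is hypothetical, and the 85 °C default is an illustrative assumption rather than an NVIDIA specification. Fields the platform does not report (shown as `[N/A]`) are treated as unknown.

```bash
# Classify one "power, temperature" CSV reading from nvidia-smi.
# check_reading is a hypothetical helper; the default limit of 85 C is
# an illustrative assumption, not an NVIDIA specification.
check_reading() {
  local line limit power temp status
  line=$1
  limit=${2:-85}
  # Split on the comma and strip spaces from each field
  power=$(printf '%s' "${line%%,*}" | tr -d ' ')
  temp=$(printf '%s' "${line##*,}" | tr -d ' ')
  case $temp in
    ''|*[!0-9]*) status=unknown ;;   # non-numeric, e.g. "[N/A]"
    *) if [ "$temp" -gt "$limit" ]; then status=hot; else status=ok; fi ;;
  esac
  echo "status=$status power_w=$power temp_c=$temp"
}

# On the device, feed it a live reading (skipped when nvidia-smi is absent)
if command -v nvidia-smi >/dev/null 2>&1; then
  check_reading "$(nvidia-smi --query-gpu=power.draw,temperature.gpu \
    --format=csv,noheader,nounits | head -n 1)"
fi
```

Running the query in a loop, or with nvidia-smi's own `-l` interval option, turns this into a simple watchdog during long training runs.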

Next Steps

Your DGX Spark is now a personal AI development powerhouse. Explore the NVIDIA NGC catalog for hundreds of pre-optimized AI models and containers, join the NVIDIA Developer Program for additional resources, and start building.

Need help with your DGX Spark setup or AI development workflow? Contact us for technical support, team deployment, and training recommendations.