NVIDIA CUDA Libraries Explained: cuDNN, TensorRT, Triton, and When to Use Each

NVIDIA’s AI software ecosystem is powerful but sprawling. With names like cuDNN, TensorRT, Triton, NCCL, cuBLAS, and RAPIDS, it’s easy to get lost. This guide cuts through the confusion and explains each library’s role, when to use it, and how they work together.

The Big Picture

Think of NVIDIA’s software stack as layers:

- CUDA Toolkit: the foundation. GPU programming model and compiler.

- Math Libraries: optimized building blocks (cuBLAS, cuFFT, cuSPARSE).

- Domain Libraries: AI-specific acceleration (cuDNN, TensorRT, NCCL).

- Application Frameworks: end-to-end solutions (Triton, DeepStream, NeMo).

Most AI developers work in the top two layers: the domain libraries and the application frameworks. You only drop down to the CUDA Toolkit or the math libraries when building custom operations or optimizing performance-critical paths.

cuDNN: The Deep Learning Primitives Library

What it does: Provides GPU-optimized implementations of fundamental deep learning operations: convolutions, recurrent neural networks, normalization, activation functions, and attention mechanisms.

When to use it: You usually don’t call cuDNN directly. PyTorch, TensorFlow, and other frameworks use cuDNN under the hood for their GPU operations. Many framework builds bundle cuDNN or pin a compatible version; otherwise you install it alongside the CUDA Toolkit. Either way, you rarely write cuDNN code yourself.

Key insight: When your framework picks up a newer cuDNN release, your existing PyTorch code can get faster without any code changes. This is why keeping your framework and cuDNN versions current matters.
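To see which cuDNN build a PyTorch install is actually using, a quick check along these lines can help. This is a minimal sketch: `cudnn_report` is a hypothetical helper name, and because PyTorch may not be installed on the machine running it, the function returns a placeholder result instead of failing.

```python
import importlib.util


def cudnn_report():
    """Summarize the cuDNN build visible to PyTorch, if PyTorch is installed."""
    if importlib.util.find_spec("torch") is None:
        return {"torch_installed": False}

    import torch

    return {
        "torch_installed": True,
        "torch_version": torch.__version__,
        "cuda_available": torch.cuda.is_available(),
        "cudnn_available": torch.backends.cudnn.is_available(),
        # Integer version code, or None when cuDNN is not available.
        "cudnn_version": torch.backends.cudnn.version(),
    }


report = cudnn_report()
for key, value in report.items():
    print(f"{key}: {value}")
```

Running this before and after a framework upgrade is a cheap way to confirm that the cuDNN version really changed.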

TensorRT: The Inference Optimizer

What it does: Takes a trained model (from PyTorch, TensorFlow, or ONNX) and optimizes it for maximum inference speed on NVIDIA GPUs. It fuses layers, eliminates redundant operations, selects optimal precision (FP32/FP16/INT8/FP4), and generates a hardware-specific execution plan.
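Layer fusion is easiest to see with a batch-norm fold. The sketch below is plain Python arithmetic on a single scalar channel, not TensorRT code. It only illustrates the kind of algebraic rewrite an inference optimizer can apply, and all parameter values are made up.

```python
import math


def linear(x, w, b):
    return w * x + b


def batch_norm(y, gamma, beta, mean, var, eps=1e-5):
    return gamma * (y - mean) / math.sqrt(var + eps) + beta


def fold_batch_norm(w, b, gamma, beta, mean, var, eps=1e-5):
    """Return (w', b') so that linear(x, w', b') equals batch_norm(linear(x, w, b))."""
    scale = gamma / math.sqrt(var + eps)
    return w * scale, (b - mean) * scale + beta


# Hypothetical trained parameters for one channel.
params = dict(gamma=1.5, beta=0.1, mean=0.4, var=2.0)
w, b = 0.8, -0.3
w_fused, b_fused = fold_batch_norm(w, b, **params)

x = 2.0
two_ops = batch_norm(linear(x, w, b), **params)  # two layers at inference time
one_op = linear(x, w_fused, b_fused)             # one fused layer
print(two_ops, one_op)
```

The two printed values agree up to floating-point rounding, but the fused version does one multiply-add instead of two separate layers.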

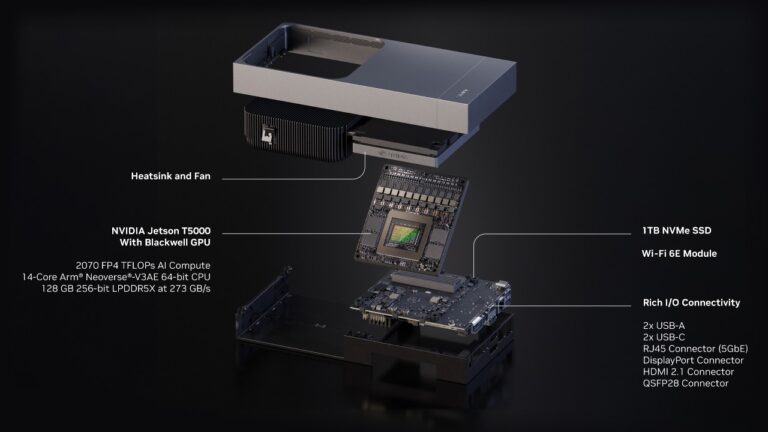

When to use it: Whenever you’re deploying a model for production inference. TensorRT often delivers a speedup in the 2-5x range over running the same model in PyTorch or TensorFlow, though the actual gain depends on the model, the precision, and the batch size. It’s especially impactful on Jetson devices, where every millisecond of latency and every watt of power matters.

Key insight: TensorRT engines are hardware-specific: an engine built for H100 won’t run on Jetson Orin. You build one engine per target platform and reuse it there.
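Because engines are tied to their target, one common pattern is to keep the portable ONNX file as the source of truth and rebuild per platform. The sketch below assembles a command line for `trtexec`, the command-line tool that ships with TensorRT, and only runs it when both the tool and the model file are present. The file names and the `target` tag are hypothetical.

```python
import os
import shutil
import subprocess


def engine_path_for(model_stem, target):
    """Name the engine after its target so builds for different GPUs never collide."""
    return f"{model_stem}.{target}.plan"


def trtexec_command(onnx_path, engine_path, fp16=True):
    cmd = ["trtexec", f"--onnx={onnx_path}", f"--saveEngine={engine_path}"]
    if fp16:
        cmd.append("--fp16")  # allow FP16 kernels where the GPU supports them
    return cmd


onnx_path = "model.onnx"  # hypothetical exported model
cmd = trtexec_command(onnx_path, engine_path_for("model", "h100"))
print(" ".join(cmd))

# Build only on a machine that has TensorRT installed and the model on disk.
if shutil.which("trtexec") and os.path.exists(onnx_path):
    subprocess.run(cmd, check=True)
```

The build has to run on the target platform itself (or a matching one), which is why the engine file name carries the target tag.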

Triton Inference Server: Production Model Serving

What it does: A production-grade inference serving platform that manages model loading, batching, scheduling, and scaling. It supports multiple model formats (TensorRT, ONNX, PyTorch, TensorFlow) and provides a standardized REST/gRPC API for inference requests.
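As a rough idea of what the standardized REST API looks like, the sketch below builds the URL and JSON body for one inference call in the KServe v2 inference protocol that Triton implements. It does not send anything. The model name `resnet50`, the input name `input__0`, and the tiny 2x2 input are hypothetical, and port 8000 assumes Triton's default HTTP port.

```python
import json


def build_infer_request(base_url, model_name, input_name, rows):
    """Build the URL and JSON body for one call with a 2-D FP32 input."""
    n_cols = len(rows[0])
    if any(len(row) != n_cols for row in rows):
        raise ValueError("all rows must have the same length")
    body = {
        "inputs": [
            {
                "name": input_name,
                "shape": [len(rows), n_cols],
                "datatype": "FP32",
                # Tensor contents flattened in row-major order.
                "data": [float(v) for row in rows for v in row],
            }
        ]
    }
    url = f"{base_url}/v2/models/{model_name}/infer"
    return url, json.dumps(body)


url, payload = build_infer_request(
    "http://localhost:8000", "resnet50", "input__0", [[0.1, 0.2], [0.3, 0.4]]
)
print(url)
print(payload)
```

In practice the payload would be sent with an HTTP POST, or the whole step replaced by NVIDIA's `tritonclient` package, which wraps the same protocol.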

When to use it: When you need to serve AI models in production, especially when managing multiple models, handling concurrent requests, or scaling across multiple GPUs. Once dynamic batching is enabled in a model’s configuration, Triton combines multiple small requests into efficient GPU batches without any changes on the client side.
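The idea behind dynamic batching can be sketched with a small simulation. This is a simplified model, not Triton's actual scheduler: it assumes a batch closes either when it is full or when the oldest queued request has waited longer than a delay budget. The function name and all timings are hypothetical.

```python
def dynamic_batches(arrivals_us, max_batch_size, max_delay_us):
    """Group sorted request arrival times (microseconds) into batches.

    A batch is closed when it is full, or when the next request arrives
    after the oldest queued request has already waited max_delay_us.
    """
    batches, current = [], []
    for t in arrivals_us:
        if current and t - current[0] > max_delay_us:
            batches.append(current)
            current = []
        current.append(t)
        if len(current) == max_batch_size:
            batches.append(current)
            current = []
    if current:
        batches.append(current)
    return batches


arrivals = [0, 50, 120, 130, 900, 950, 2500]
for batch in dynamic_batches(arrivals, max_batch_size=4, max_delay_us=500):
    print(len(batch), batch)
```

Bursty traffic fills batches quickly, while a lone request still gets served after a bounded wait. That trade-off between throughput and latency is what the batch size and delay settings control.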

Key insight: TensorRT optimizes the model itself; Triton optimizes everything around the model: request routing, batching, GPU memory management, and model versioning. Use both together for maximum production performance.

NCCL: Multi-GPU Communication

What it does: The NVIDIA Collective Communications Library (NCCL) provides optimized multi-GPU and multi-node communication primitives: the all-reduce, all-gather, broadcast, and reduce-scatter operations that distributed AI training depends on.

When to use it: Any time you’re training on more than one GPU. PyTorch’s DistributedDataParallel and DeepSpeed both use NCCL under the hood. Like cuDNN, you rarely call it directly, but having the right version installed is critical for multi-GPU training performance.
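What these collectives compute can be shown with a toy simulation in which each "rank" is just a Python list. This sketch covers semantics only: it says nothing about how NCCL moves data over NVLink or the network, and it assumes the buffer length divides evenly by the number of ranks.

```python
def all_reduce_sum(per_rank):
    """Every rank ends up with the element-wise sum over all ranks."""
    total = [sum(vals) for vals in zip(*per_rank)]
    return [list(total) for _ in per_rank]


def all_gather(per_rank):
    """Every rank ends up with the concatenation of all ranks' buffers."""
    gathered = [v for buf in per_rank for v in buf]
    return [list(gathered) for _ in per_rank]


def broadcast(per_rank, root=0):
    """Every rank ends up with a copy of the root rank's buffer."""
    return [list(per_rank[root]) for _ in per_rank]


def reduce_scatter_sum(per_rank):
    """Sum across ranks, then give rank i the i-th equal chunk of the result."""
    n = len(per_rank)
    total = [sum(vals) for vals in zip(*per_rank)]
    chunk = len(total) // n  # assumes len(total) is divisible by n
    return [total[i * chunk:(i + 1) * chunk] for i in range(n)]


grads = [[1, 2, 3, 4], [10, 20, 30, 40]]  # gradients on two simulated ranks
print(all_reduce_sum(grads))
print(reduce_scatter_sum(grads))
print(all_gather(reduce_scatter_sum(grads)))
```

The last line shows why these primitives belong together: a reduce-scatter followed by an all-gather produces the same result as an all-reduce.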

cuBLAS and cuBLASLt: Linear Algebra

What it does: GPU-optimized basic linear algebra operations: matrix multiplication, vector operations, and batched GEMM. cuBLASLt adds a flexible API for mixed-precision and Tensor Core operations.

When to use it: Framework-level developers and researchers building custom layers. Most AI practitioners benefit indirectly, because cuBLAS powers the matrix multiplications at the heart of most neural network layers.
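For reference, GEMM computes C = alpha * A * B + beta * C. The sketch below spells that out in plain Python on row-major lists of lists. It illustrates the operation only and is not the cuBLAS API, which works on device memory and uses column-major storage by convention.

```python
def gemm(alpha, a, b, beta, c):
    """Return alpha * (a @ b) + beta * c for row-major lists of lists."""
    m, k, n = len(a), len(b), len(b[0])
    if any(len(row) != k for row in a):
        raise ValueError("inner dimensions of a and b do not match")
    if len(c) != m or any(len(row) != n for row in c):
        raise ValueError("c must have shape (rows of a) x (columns of b)")
    return [
        [
            alpha * sum(a[i][p] * b[p][j] for p in range(k)) + beta * c[i][j]
            for j in range(n)
        ]
        for i in range(m)
    ]


a = [[1.0, 2.0], [3.0, 4.0]]
b = [[5.0, 6.0], [7.0, 8.0]]
c = [[1.0, 1.0], [1.0, 1.0]]
print(gemm(2.0, a, b, 0.5, c))
```

A fully connected layer is this same operation with A as the activations, B as the weights, and the bias folded into the beta * C term.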

RAPIDS: Data Science on GPU

What it does: GPU-accelerated data science libraries that mirror familiar Python tools: cuDF (like pandas), cuML (like scikit-learn), and cuGraph (graph analytics). Data processing that takes hours on CPU can complete in minutes on GPU.

When to use it: Data preprocessing, feature engineering, and traditional ML workflows. RAPIDS is transformative for data science teams who spend most of their time on data preparation rather than model training.
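Because cuDF mirrors the pandas API, the same preprocessing code can often run on either. The sketch below prefers cuDF and falls back to pandas when cuDF is not usable. It assumes at most one of the two is installed, and the helper names, column names, and data are hypothetical.

```python
import importlib


def pick_dataframe_library():
    """Prefer cuDF when it imports cleanly; otherwise fall back to pandas."""
    for name in ("cudf", "pandas"):
        try:
            return importlib.import_module(name)
        except Exception:  # missing package, or no usable GPU/driver for cuDF
            continue
    return None


def mean_latency_by_model(xdf, rows):
    """Identical groupby code for either library."""
    frame = xdf.DataFrame(rows)
    return frame.groupby("model")["latency_ms"].mean()


xdf = pick_dataframe_library()
if xdf is None:
    print("neither cudf nor pandas is installed")
else:
    rows = {"model": ["a", "a", "b"], "latency_ms": [1.0, 3.0, 5.0]}
    print(xdf.__name__)
    print(mean_latency_by_model(xdf, rows))
```

On a dataset this small the GPU offers no benefit; the point is only that the calling code does not change when the backend does.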

DeepStream: Video Analytics Pipeline

What it does: A streaming analytics framework for building AI-powered video pipelines. It handles video decode, preprocessing, inference (via TensorRT), tracking, and analytics in a single optimized pipeline.

When to use it: Multi-camera video analytics, traffic monitoring, retail analytics, and any application that processes video streams with AI. DeepStream can process dozens of concurrent video streams on a single GPU.
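A back-of-the-envelope calculation shows where "dozens of streams" can come from. The sketch below assumes inference throughput is the only bottleneck and ignores decode, tracking, and memory limits. The throughput figure is a made-up example, not a measured DeepStream number.

```python
def max_streams(engine_fps, stream_fps, infer_every_n_frames=1):
    """Upper bound on concurrent streams if inference throughput is the only limit."""
    frames_needed_per_stream = stream_fps / infer_every_n_frames
    return int(engine_fps // frames_needed_per_stream)


# Hypothetical detector that sustains 1200 frames per second on one GPU.
every_frame = max_streams(engine_fps=1200, stream_fps=30)
every_other = max_streams(engine_fps=1200, stream_fps=30, infer_every_n_frames=2)
print(every_frame, every_other)
```

Running inference on every second frame doubles the bound in this model, which is why frame skipping combined with tracking is a common way to fit more cameras on one GPU.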

How They Work Together

A typical production AI pipeline uses multiple libraries:

- Training: PyTorch + cuDNN + NCCL (multi-GPU) + cuBLAS

- Optimization: Export to ONNX → TensorRT engine

- Serving: Triton Inference Server loads the TensorRT engine

- Data pipeline: RAPIDS for preprocessing → Triton for inference

- Video pipeline: DeepStream for decode → TensorRT for inference

The Bottom Line

You don’t need to master every library. For most AI practitioners: use PyTorch for training (it calls cuDNN and NCCL automatically), TensorRT for inference optimization, and Triton for production serving. Add RAPIDS if data preprocessing is a bottleneck, and DeepStream if you’re building video analytics.
