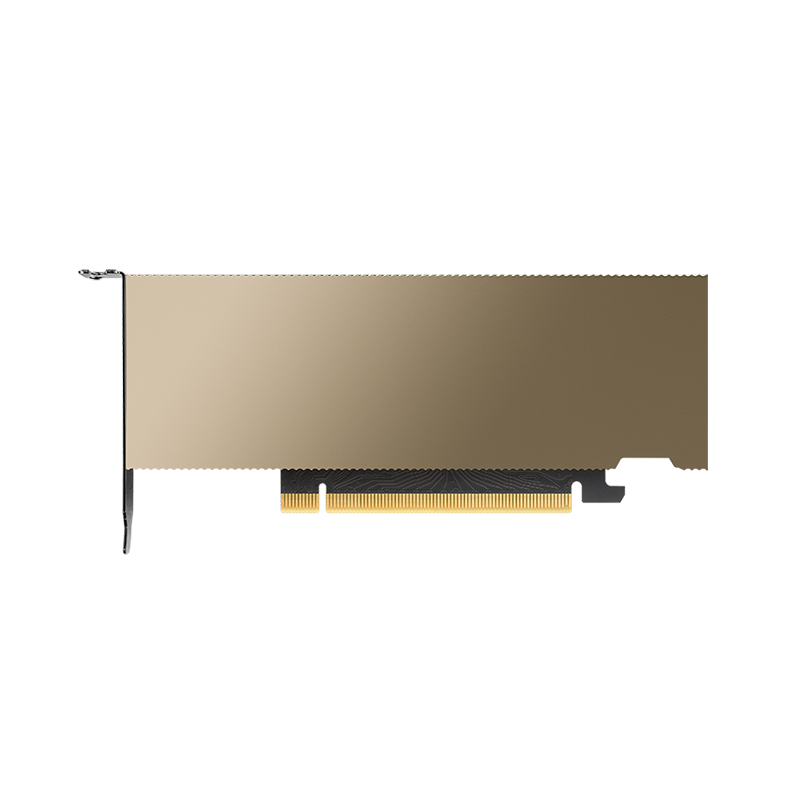

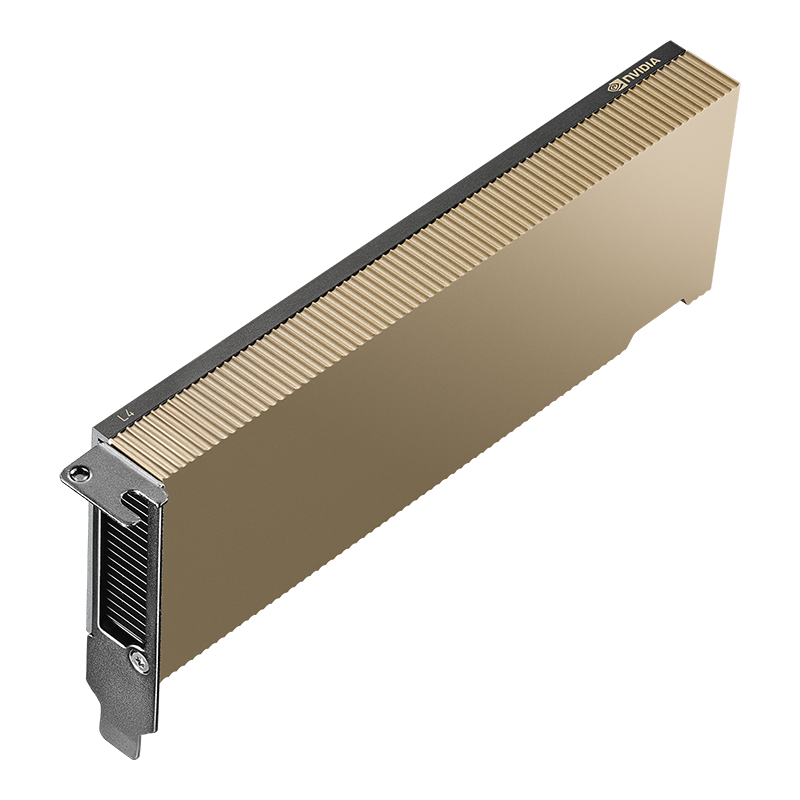

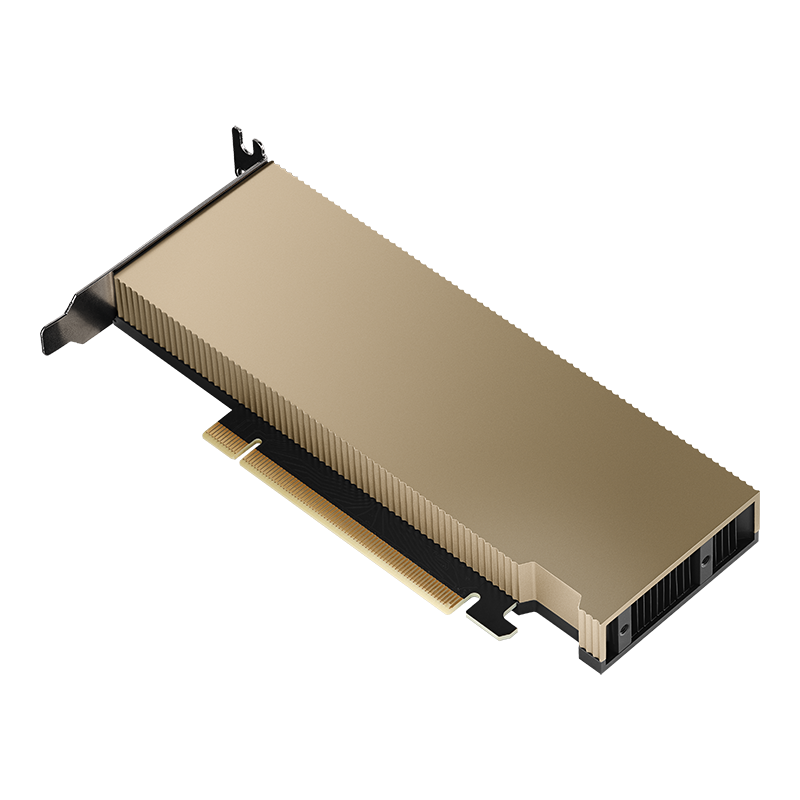

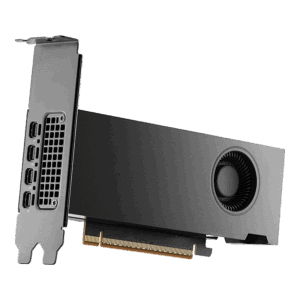

NVIDIA L4

Ada Lovelace inference accelerator with 24 GB memory in a compact 72W low-profile, single-slot form factor. Delivers universal acceleration for AI inference, video, and graphics at the edge and in the cloud.

🚀 Express Shipping Available Across Europe & MENA

- Full Insurance on All Shipments

- Tracked Delivery & Real-Time Updates

Overview

The NVIDIA L4 is the most versatile and energy-efficient data center GPU for AI inference. Built on the Ada Lovelace architecture, it delivers 485 TFLOPS of FP8 Tensor Core performance with 24GB of GDDR6 memory — all within a 72W, single-slot, low-profile form factor that fits in any standard server.

With fourth-generation Tensor Cores, third-generation RT Cores, and dedicated hardware encoders/decoders, the L4 accelerates the broadest range of AI inference, video transcoding, and graphics workloads at the lowest power per inference in the data center.

Key Features

- 485 TFLOPS FP8: High-throughput AI inference at ultra-low power

- 30.3 TFLOPS FP32: Strong compute for rendering and simulation

- 24GB GDDR6: Ample memory for most inference models

- 72W — No External Power: Runs from PCIe slot power in any server

- Single-Slot Low-Profile: Universal server compatibility

- Hardware Video: 2x NVENC + 4x NVDEC for AI video pipelines

Technical Specifications

| Specification | Details |

|---|---|

| GPU Architecture | NVIDIA Ada Lovelace |

| Memory | 24 GB GDDR6 |

| Memory Bandwidth | 300 GB/s |

| FP32 | 30.3 TFLOPS |

| TF32 Tensor | 120 TFLOPS (Sparse) |

| FP16/BF16 Tensor | 242 TFLOPS (Sparse) |

| FP8 Tensor | 485 TFLOPS (Sparse) |

| INT8 Tensor | 485 TOPS (Sparse) |

| TDP | 72W |

| Interface | PCIe Gen4 x16 |

| Form Factor | Single-slot, low-profile, passive cooling |

Ideal Use Cases

- AI inference at scale — deploy 1 to 8 per server for maximum density

- AI video transcoding and real-time video analytics

- Cloud AI services and inference-as-a-service platforms

- Edge data center AI where power and space are constrained

- Virtual desktop and cloud gaming infrastructure

Why Choose This Product?

The L4 delivers the best inference-per-watt in the NVIDIA data center portfolio. At 72W with no external power required, it transforms any server into an AI inference platform. For organizations deploying AI inference at scale across thousands of servers, the L4’s efficiency and universal compatibility make it the foundation of cost-effective AI infrastructure.

Interested? Contact us for server recommendations, multi-GPU density planning, and volume pricing.

Reviews

There are no reviews yet.