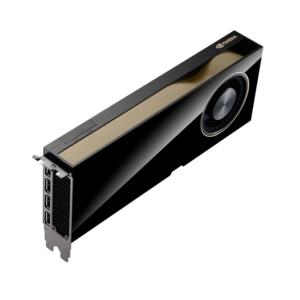

NVIDIA H100 SXM

The GPU that launched the AI era: 80GB HBM3, 3.35 TB/s bandwidth, up to 3,958 TFLOPS FP8 (with sparsity), and NVLink at 900 GB/s, in an SXM form factor for HGX and DGX systems.

🚀 Express Shipping Available Across Europe & MENA

- Full Insurance on All Shipments

- Tracked Delivery & Real-Time Updates

Overview

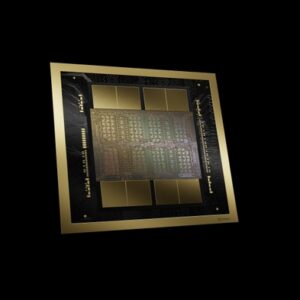

The NVIDIA H100 SXM is the GPU that ignited the generative AI revolution. Built on the NVIDIA Hopper architecture, it delivers up to 3,958 TFLOPS of FP8 Tensor Core performance (peak, with sparsity) with 80GB of HBM3 memory at 3.35 TB/s bandwidth. As the compute engine behind DGX H100 and HGX H100 systems, it remains one of the most widely deployed data center GPUs for AI training and inference at scale.

The H100 introduced the Transformer Engine with FP8 precision support and fourth-generation NVLink at 900 GB/s. It also carries forward Multi-Instance GPU (MIG) technology, first introduced on the previous-generation A100, with up to 7 isolated GPU instances. Together these set the foundation for modern AI infrastructure.

Key Features

- 3,958 TFLOPS FP8 (peak, with sparsity): Fourth-generation Tensor Cores with Transformer Engine

- 80GB HBM3: 3.35 TB/s memory bandwidth for large model training

- NVLink 900 GB/s: Fourth-generation NVLink for multi-GPU scaling

- MIG: Up to 7 isolated GPU instances for multi-tenant workloads

- 67 TFLOPS FP32: Strong HPC and scientific computing performance

- Confidential Computing: Hardware-based security for sensitive AI workloads

Technical Specifications

| Specification | Details |

|---|---|

| GPU Architecture | NVIDIA Hopper |

| Memory | 80 GB HBM3 |

| Memory Bandwidth | 3.35 TB/s |

| FP32 | 67 TFLOPS |

| FP64 Tensor | 67 TFLOPS |

| TF32 Tensor | 989 TFLOPS |

| FP16/BF16 Tensor | 1,979 TFLOPS |

| FP8 Tensor | 3,958 TFLOPS |

| INT8 Tensor | 3,958 TOPS |

| TDP | Up to 700W (configurable) |

| NVLink | 900 GB/s (4th Gen) |

| PCIe | Gen5 128 GB/s |

| MIG | Up to 7 instances (10GB each) |

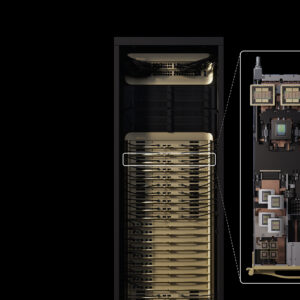

| Form Factor | SXM |
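One rough way to read the compute and bandwidth figures together is the roofline model: dividing peak throughput by memory bandwidth gives the arithmetic intensity (FLOPs per byte moved) a kernel needs before it stops being limited by memory. The Python sketch below applies that to the numbers in the specification table. The function names are illustrative and not part of any NVIDIA tool, and the inputs are vendor peak figures, so real workloads will land below these bounds.

```python
# Peak figures copied from the specification table (vendor peak values).
PEAK_TFLOPS = {
    "FP32": 67,
    "TF32 Tensor": 989,
    "FP16/BF16 Tensor": 1979,
    "FP8 Tensor": 3958,
}
MEMORY_BANDWIDTH_TBPS = 3.35  # TB/s
MEMORY_GB = 80                # decimal GB


def ridge_point(peak_tflops: float,
                bandwidth_tbps: float = MEMORY_BANDWIDTH_TBPS) -> float:
    """FLOPs per byte at which a kernel shifts from memory-bound to compute-bound."""
    return peak_tflops / bandwidth_tbps


def full_memory_sweep_ms(memory_gb: float = MEMORY_GB,
                         bandwidth_tbps: float = MEMORY_BANDWIDTH_TBPS) -> float:
    """Lower bound on the time to read all of HBM once, in milliseconds."""
    bandwidth_gb_per_s = bandwidth_tbps * 1000
    return memory_gb / bandwidth_gb_per_s * 1000


if __name__ == "__main__":
    for precision, tflops in PEAK_TFLOPS.items():
        print(f"{precision}: ~{ridge_point(tflops):.0f} FLOPs/byte to be compute-bound")
    print(f"One full pass over 80 GB: ~{full_memory_sweep_ms():.1f} ms at best")
```

Under these assumptions, FP32 work becomes compute-bound at roughly 20 FLOPs per byte, while FP8 Tensor Core work needs well over 1,000, which is why low-precision inference on this class of GPU is often limited by memory bandwidth rather than compute.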

Ideal Use Cases

- Large-scale AI model training — LLMs, diffusion models, multimodal AI

- High-throughput AI inference for production deployments

- High-performance computing (HPC) and scientific simulation

- Multi-tenant AI platforms via MIG partitioning
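For a first-pass sizing check against these use cases, the sketch below estimates the memory needed for model weights alone and compares it with the full 80 GB GPU and a single 10 GB MIG instance. It is a simplified illustration: the model sizes are hypothetical examples, the helper names are made up for this page, and it ignores activations, KV cache, optimizer state, and framework overhead, all of which add to the real footprint.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"FP32": 4, "FP16": 2, "BF16": 2, "FP8": 1}

GPU_MEMORY_GB = 80  # full H100 SXM, from the specification table
MIG_SLICE_GB = 10   # smallest MIG instance, from the specification table


def weights_gb(params_billion: float, precision: str) -> float:
    """Memory for the weights alone, in decimal GB (1e9 params * bytes / 1e9)."""
    return params_billion * BYTES_PER_PARAM[precision]


def fits(params_billion: float, precision: str, capacity_gb: float) -> bool:
    """True if the weights alone fit within the given capacity."""
    return weights_gb(params_billion, precision) <= capacity_gb


if __name__ == "__main__":
    # Hypothetical model sizes, in billions of parameters.
    for size in (7, 13, 70):
        for precision in ("FP16", "FP8"):
            print(
                f"{size}B @ {precision}: {weights_gb(size, precision):.0f} GB weights | "
                f"full GPU: {fits(size, precision, GPU_MEMORY_GB)} | "
                f"one MIG slice: {fits(size, precision, MIG_SLICE_GB)}"
            )
```

A result of `True` here only means the weights fit; training in particular needs several times more memory per parameter, so treat this as a lower bound when planning HGX H100 configurations.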

Why Choose This Product?

The H100 SXM is among the most proven and widely deployed AI training GPUs in the world. With a large installed base, a mature software ecosystem, and optimization across the major AI frameworks, it remains a reliable choice for organizations building production AI infrastructure.

Interested? Contact us for HGX H100 configurations, pricing, and deployment support.
